Mobile eye tracking glasses enable real-world measurement of attention, gaze, and cognition outside labs. Modern devices vary in accuracy, usability, and recording capacity. Combined with biosensors like GSR, EEG, and facial analysis, they provide deeper insight into perception, emotion, and behavior. Learn how to choose the best eye tracking glasses for your research.

In striving to move data collection out of the lab and into the wild, eye tracking technology companies have continually evolved their devices to be more accurate and robust. As eye tracking solutions become more mobile, subjects no longer need to be tethered to a screen, which allows for greater ecological validity in research designs.

Eye tracking glasses, in which the eye tracking technology is built into a pair of glasses worn by the user rather than mounted to a screen, give researchers the opportunity to collect data such as attention, gaze patterns, and saliency, and to gain insights into visual perception and cognitive performance across a variety of research domains.

(Image courtesy of University of Connecticut School of Engineering)

Common applications for mobile eye tracking research include marketing, sports performance, healthcare, and medical training. While the technology driving these devices can be quite advanced, with the right tools, such as the analytics platform provided by iMotions, researchers are finding it easier than ever to leverage eye tracking glasses to gain insight into how people allocate attention.

Furthermore, insights into visual attention can be combined with other sensors to better detect human states in more ecologically valid environments. This approach can include using galvanic skin response (GSR) and ECG data to gain insights into how emotionally relevant certain visual targets are in testing environments such as a store, medical office, or training simulator.

Additionally, measures like EEG can be combined with eye tracking glasses to understand complex cognitive states such as workload or drowsiness at an electrophysiological level.

iMotions specializes in helping researchers move beyond signal data and into multi-sensor applications that help our clients understand emotional responses. Simply put, iMotions helps you add the “How” and “How much” of the emotional story to the “Where” story of eye tracking.

This article reviews the latest in mobile eye tracking by comparing different hardware options for eye tracking glasses, and presents some ways to gather research insights thanks to the latest advancements in eye tracking glasses technology.

Hardware Review

When selecting a pair of eye tracking glasses, weigh the device specifications against your research parameters, such as:

- Sampling rate

- Accuracy

- Recording limits

- Ease of use

- Data output

- Overall operating cost

The first and most often overlooked question is the need for accuracy, which is related to the size of the target areas of interest (AOIs) and the range of viewing distances in the study.

If the objective of the study depends on distinguishing attention between two small objects that are far away, then you will need a device accurate enough to separate them. Accuracy is usually reported in degrees of visual angle, where a smaller value is better.
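As a rough illustration of how AOI size, viewing distance, and accuracy interact, the sketch below converts an object's physical size into the visual angle it subtends and compares that to a device's accuracy. The function names, the 5 cm label, the 1.0 degree accuracy figure, and the "AOI should span at least twice the accuracy" rule of thumb are all assumptions chosen for the example, not vendor specifications.

```python
import math

def visual_angle_deg(size_m: float, distance_m: float) -> float:
    """Angle in degrees subtended by an object of size_m seen from distance_m."""
    return math.degrees(2 * math.atan(size_m / (2 * distance_m)))

def aoi_is_resolvable(size_m: float, distance_m: float,
                      accuracy_deg: float, margin: float = 2.0) -> bool:
    """Assumed rule of thumb: the AOI should span at least margin x the accuracy."""
    return visual_angle_deg(size_m, distance_m) >= margin * accuracy_deg

# Hypothetical example: a 5 cm wide product label viewed up close and from afar.
near = visual_angle_deg(0.05, 0.5)  # about 5.7 degrees at 0.5 m
far = visual_angle_deg(0.05, 3.0)   # about 0.95 degrees at 3 m

print(f"near: {near:.2f} deg, far: {far:.2f} deg")
print(aoi_is_resolvable(0.05, 0.5, accuracy_deg=1.0))  # True
print(aoi_is_resolvable(0.05, 3.0, accuracy_deg=1.0))  # False
```

The same label that is comfortably larger than the tracker's error at arm's length shrinks below it from across a room, which is why the range of viewing distances matters as much as the AOI size.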

While a comprehensive review is out of the scope of this article, below is a review of some of the stand-out features of many of the eye tracking options available today. In addition, iMotions experts are here to help in all things mobile eye tracking, so feel free to reach out for a free research consultation on what the best hardware will be for your research objectives.

Eye tracking hardware comparison summary review

*(Opinions offered reflect the personal views of the author and are not an official position of iMotions.)

Argus Science ETVision System

The ETVision glasses herald the return of veteran eye tracking pioneers Argus Science, formerly known as ASL. Standout features of the brand-new ETVision system include a 180 Hz eye measurement rate, built-in two-way audio communication, and access to the feed from all of the cameras, which allows for easier adjustments during setup.

The exchangeable scene lens located on the bridge provides a 720p image with a 96-degree field of view. The controller unit contains an internal battery that can reportedly record continuously for over 5 hours. Note, however, that recording time is also limited by the capacity of the SD card: a standard 128 GB card is estimated to hold up to 2 hours of data.
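To make the recording limits concrete, the short calculation below works out the data rate implied by the figures quoted above and shows that the effective session length is set by whichever runs out first, battery or storage. The helper names are hypothetical, and the result assumes decimal gigabytes and a constant data rate.

```python
def implied_data_rate_mb_s(card_gb: float, hours: float) -> float:
    """Average write rate in MB/s if card_gb fills up in the given number of hours."""
    return card_gb * 1000 / (hours * 3600)

def max_session_hours(battery_hours: float, storage_hours: float) -> float:
    """A session ends when either the battery or the storage is exhausted."""
    return min(battery_hours, storage_hours)

# Figures as quoted in this review: 128 GB card, about 2 hours of data, 5+ hours of battery.
rate = implied_data_rate_mb_s(128, 2)
limit = max_session_hours(battery_hours=5, storage_hours=2)

print(f"implied data rate: {rate:.1f} MB/s")  # roughly 17.8 MB/s
print(f"effective session limit: {limit} h")  # storage, not battery, is the bottleneck
```

For long field sessions, this kind of back-of-the-envelope check helps decide whether spare cards or spare batteries are the more important thing to pack.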

Pros: Battery life; eye tracking accuracy.

Cons: Lower resolution front camera; more powerful computer recommended.

Pupil Labs Core and Invisible

Pupil Labs was founded in 2014, so they are relative newcomers to the field, but they have debuted with two attractive offerings: the Pupil Core and, later, the Pupil Invisible.

The most obvious standout feature is the price relative to performance, with these units being affordable compared to their competitors. The first model, the Pupil Labs Core, was hailed for its flexibility and adaptability, with features such as an adjustable eye tracking sensor position and open source files for 3D printing mounts for custom headsets.

The Core still has a strong following in the Research & Development (R&D) community. Its main drawbacks were limited usability for mainstream users and the lack of pre-processing of the eye tracking data, which means it requires more CPU power to run than competing devices. However, many of these issues were addressed with the follow-up device, the Pupil Labs Invisible.

Pros: Hardware adjustability; cost.

Cons: Durability; high CPU load.

Their newest model, the Pupil Labs Invisible, presents a more polished product with unrivaled ease of use, especially thanks to features such as the magnetic, temple-mounted scene camera and calibration-free data collection.

The main advancement of the Invisible over the Core model was the inclusion of a smartphone that serves as the power source, storage, and user interface, connected via a USB Type-C cable. This feature allows users to more easily collect data, sync files to the cloud, and review the collected data.

The reported recording time is 150 minutes on a single charge, and the included mobile phone offers up to 8 hours of storage on its SD card (although your mileage may vary). Optional extras include a prescription lens kit that can accommodate from -3 to +3 diopters, and a head strap (which I highly recommend for added stability in the field).

Pros: Cost, ease of use, normal form factor.

Cons: Slightly biased eye tracking accuracy compared to other offerings; lower-spec camera resolution.

Check out our specs for Pupil Labs Invisible

Viewpointsystem VPS 19

Viewpointsystem is the newest mobile glasses vendor supported on the iMotions platform. The VPS 19 sports a more industrial design better suited to healthcare, sports, and industrial applications, and features a Full HD 13MP camera located on the bridge with built-in status indicators.

The glasses are connected to their Smart Unit, which presents information on a very user-friendly 4.3-inch multi-touch display and offers two USB-C ports for I/O when you need to move data. The batteries are hot-swappable for continuous, uninterrupted data collection, which is a plus for long recordings. Storage is handled through 64GB of flash memory integrated into the unit.

Other features of note include speakers built into both the glasses and the Smart Unit for communication. Viewpointsystem is also developing a “Click on” Mixed Reality device that can be added for eye tracking in MR applications.

Pros: High overall build quality; best Smart Unit functionality; hot-swap power.

Cons: Lowest storage space.

Check out our specs for VPS 19

Turning Mobile Eye Tracking data into insights

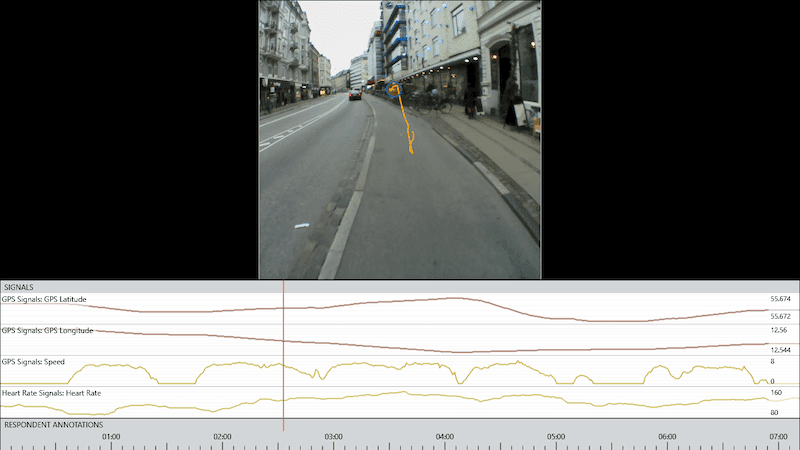

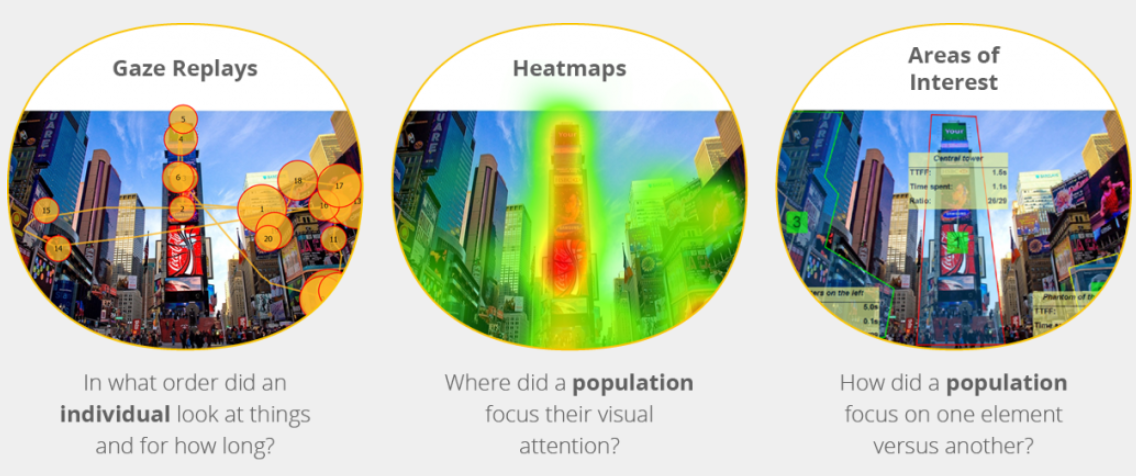

Researchers leverage many analysis tools and methods to convert gaze replay data from eye tracking glasses into actionable insights, including but not limited to gaze plots, heat maps, and combinations with other sensors such as facial expression detection, GSR/EDA, or heart rate.

Gaze plots show the history of one individual’s experience as they visually explore a scene. Typically displayed as a series of lines and circles, gaze plots provide an understanding of where a person was looking in the moment.

This recording can shed light on what a person paid attention to, in what order, for how long, and whether they revisited certain areas within the field of view.
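The questions a gaze plot answers (what was looked at, in what order, for how long, and whether it was revisited) can also be computed directly from a labeled fixation sequence. The sketch below is a minimal illustration; the fixation records, AOI names, and the `summarize` helper are hypothetical and do not reflect any particular export format.

```python
# Hypothetical fixation records in viewing order: (AOI label, duration in ms).
fixations = [
    ("shelf", 220),
    ("price_tag", 180),
    ("shelf", 310),
    ("logo", 150),
    ("price_tag", 240),
]

def summarize(fixations):
    """Per-AOI summary: first fixation index, total dwell time, and number of visits."""
    summary = {}
    previous = None
    for order, (aoi, duration_ms) in enumerate(fixations):
        stats = summary.setdefault(
            aoi, {"first_seen": order, "dwell_ms": 0, "visits": 0}
        )
        stats["dwell_ms"] += duration_ms
        if aoi != previous:  # a new visit starts whenever gaze arrives from elsewhere
            stats["visits"] += 1
        previous = aoi
    return summary

result = summarize(fixations)
for aoi, stats in result.items():
    revisits = stats["visits"] - 1
    print(f"{aoi}: first at #{stats['first_seen']}, "
          f"{stats['dwell_ms']} ms total, {revisits} revisit(s)")
```

Here the shelf and the price tag are each revisited once, while the logo receives a single short look, which is the same story a gaze plot would tell visually.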

However, matching locations in the recording can be a tedious task of going frame by frame and identifying the object being looked at, a process known as manually coding areas of interest (AOIs). This process can be automated and accelerated with AI-driven advances such as the iMotions Gaze Mapping tool.

The iMotions Gaze Mapping tool uses state-of-the-art computer vision algorithms to recognize a particular object or scene, and then aggregates the data from the dynamic recording into a single image that describes the subject’s entire interaction with that object. The result allows the user to turn a dynamic glasses recording into static images that are easier to analyze.
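The general idea of projecting gaze from a moving scene video onto one static reference image can be sketched with a per-frame homography (a 3x3 projective transform). This is a generic computer vision technique shown for illustration only, not a description of how the iMotions tool is implemented. The matrices below are hand-written stand-ins for transforms that a real pipeline would estimate by matching image features in each frame.

```python
def apply_homography(H, x, y):
    """Map a scene-camera pixel (x, y) into reference-image coordinates using 3x3 matrix H."""
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    u = (H[0][0] * x + H[0][1] * y + H[0][2]) / w
    v = (H[1][0] * x + H[1][1] * y + H[1][2]) / w
    return u, v

def accumulate(points, width, height, cell):
    """Count mapped gaze points per grid cell of the reference image (a coarse heat map)."""
    cols, rows = width // cell, height // cell
    grid = [[0] * cols for _ in range(rows)]
    for u, v in points:
        c, r = int(u // cell), int(v // cell)
        if 0 <= c < cols and 0 <= r < rows:  # ignore gaze that falls outside the reference
            grid[r][c] += 1
    return grid

# Hypothetical per-frame transforms: frame 1 is shifted, frame 2 is seen at twice the scale.
H_frame1 = [[1, 0, 10], [0, 1, 20], [0, 0, 1]]
H_frame2 = [[0.5, 0, 0], [0, 0.5, 0], [0, 0, 1]]

# Gaze samples in each frame's own pixel coordinates.
mapped = [
    apply_homography(H_frame1, 90, 30),    # lands at (100, 50) on the reference
    apply_homography(H_frame2, 200, 100),  # also lands at (100, 50)
]

heat = accumulate(mapped, width=200, height=100, cell=50)
print(heat)  # two hits in the same cell of a 2 x 4 grid
```

Although the two gaze samples have very different pixel coordinates in their respective video frames, they fall on the same spot once mapped, which is what makes aggregation across a dynamic recording possible.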

Mobile eye tracking data is further enhanced when paired with additional sensors such as Facial Expression Analysis (FEA), Galvanic Skin Response (GSR), and Electroencephalography (EEG) to understand the full, implicit response of a study participant. While tracking visual attention patterns can yield many interesting insights into behavior and the underlying cognitive processes, it is only when paired with additional data that a deeper understanding can be reached.

Knowing that a person spent time looking at a particular object doesn’t tell you how they were feeling when looking at it. Did it excite them? Were they confused by what they were seeing?

How big a feeling was it? Here, data from tools like facial expression analysis or galvanic skin response can contribute by providing indications of an emotional response and of the magnitude of that response. By automatically synchronizing the data collection process across these multiple tools, iMotions can drive research advancement from these combined sensor results faster and more precisely than ever before.
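As a simplified picture of what synchronization makes possible, the sketch below pairs each fixation onset with the nearest GSR sample by timestamp. It assumes both streams already share a common clock, and all timestamps, values, and names are hypothetical. It is not a description of how iMotions aligns data internally.

```python
from bisect import bisect_left

def nearest_index(timestamps, t):
    """Index of the sample in a sorted timestamp list that is closest to time t."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbor is closer; ties go to the earlier sample.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1

# Hypothetical GSR stream sampled at 4 Hz (seconds, microsiemens).
gsr_times = [0.0, 0.25, 0.5, 0.75, 1.0]
gsr_values = [0.40, 0.42, 0.55, 0.61, 0.58]

# Hypothetical fixation onsets from the eye tracking glasses, on the same clock.
fixation_onsets = {"logo": 0.30, "price_tag": 0.80}

paired = {
    aoi: gsr_values[nearest_index(gsr_times, onset)]
    for aoi, onset in fixation_onsets.items()
}
print(paired)
```

In practice, skin conductance responses lag the triggering event by a second or more, so an analysis would typically look at a window after each fixation rather than a single nearest sample; the nearest-sample lookup is kept here only to show the alignment step.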

Read more: Introduction to Multi-Sensor Research
