This article was written in 2021 and is no longer up to date. For a full product overview, please refer to our products page.

iMotions is fast approaching 20 years in the market, and in that time it has built quite an ecosystem of software offerings. In this blog, we provide an overview of how far we have come. In 2005, the question of what iMotions does was easy to answer: an eye tracking company with big ambitions. Sixteen years later, the company, its expertise, and its offerings have grown with and beyond eye tracking to encompass diverse pathways for researchers. With that in mind, we’d like to offer an updated answer to that question and walk you through all of the possibilities for behavioral research with iMotions today.

At the very heart of it, iMotions is a software company that provides solutions for the present and future of behavioral research. We enable research to keep pace with the fast-changing world. Whether your research takes place in a carefully controlled lab, on a ship at sea, or in the local supermarket, we have the technology that can help you understand how humans behave in each environment. We know that the challenges facing researchers today aren’t the same as they’ve always been – the pace of research demands flexibility. That’s why we have focused on providing an adaptable system, not just in terms of sensor configurations, but also for where research can happen: anywhere.

The iMotions ecosystem in 2021 consists of several components, each designed to address a different part of today’s human behavior research challenges. We offer two main software approaches to facilitating research: lab-based and remote. Each offers a different route to understanding human behavior through technology, and both are supported by our training and services. The ecosystem includes:

| Lab-based | Remote | Research Enablement |
|---|---|---|
| iMotions Core | Remote Data Collection | Customer Success and Onboarding |
| iMotions Modules | Mobile Research Platform | iMotions Academy |
| R Notebooks | | Services and Product Specialist teams |

Lab-based Solutions

iMotions Core

iMotions Core is, quite literally, at the core of iMotions. As the central hub for running behavioral experiments, the Core software is responsible for running the show. Everything that connects to iMotions runs through this central piece of software, providing you with a base from which to set up, organize, and carry out experiments.

Core provides the foundation for executing your research in person (say, in a lab or in the field), and it also runs the show for remote research through the Remote Data Collection module. While Core is the center of operations, it also offers built-in features that complement any module (and even allow research to take place without additional modules). Fully editable and customizable surveys are included as standard, as is the ability to connect virtually anything through our API.

Over the past two years, starting with iMotions 8.0, we have also focused heavily on updating the iMotions user interface to make the software more intuitive and flexible. When exploring and examining data, researchers using iMotions Core can view the data sources of their choosing, display stimuli as they wish, and adjust signal visualizations to suit their research parameters. Now, in 2021, we are applying these user interface improvements to our eye tracking analysis suite for AOIs (see more below).

iMotions Modules – Data Collection & Analysis

The iMotions modules connect to Core and let you carry out research the way you want. Each module is a piece of software that provides data collection and analysis capabilities for a particular type of biosensor. For example, the Facial Expression Analysis module lets you gather facial coding data to measure participants’ emotional expressions. These data can be seamlessly combined with data from more than 50 different biosensors, be it eye tracking, electrodermal activity, EEG, or beyond.

The full list of iMotions modules is shown below. If you’d like to learn about the complete capabilities of each, reach out to us!

- Eye tracking – screen-based

- Eye tracking – glasses

- Eye tracking – VR

- Eye tracking – webcam

- Facial expression analysis

- Electrodermal activity (known as EDA, or GSR)

- Electroencephalography (EEG)

- Electrocardiography (ECG)*

- Electromyography (EMG)*

This modular network lets you expand your research setup flexibly, however it is configured, and that flexibility is at the center of our product proposition for 2021. We understand that many researchers want to begin with eye tracking and add modules from there, so this year we are continuing to focus on analysis capabilities for our most popular eye tracking modules with the new Areas of Interest (AOI) Editor update in iMotions 9.0. Starting in early 2021, iMotions clients can benefit from a revamped UI for setting and analyzing AOIs, numerous improvements to metrics and visualizations, and simplified exports. So whether you have gathered eye tracking data in the lab with screen-based eye tracking, on the go with eye tracking glasses, in VR, or now with online studies, you have high-performance software to help you assess visual attention.

*The ECG and EMG modules are provided together as a single package.

R Notebooks

R Notebooks are R-based scripts that process the signals coming from the hardware you use to collect sensor data and turn them into interpretable representations. The results are the presentation-ready, publishable visualizations you see in the iMotions software platform. Instead of long strings of raw sensor values, R Notebooks let you see, adjust, and export graphs and readable metrics for all of our available modules according to your study parameters. R Notebooks are fully transparent, with visible methodologies, and they compute and visualize the metrics our users rely on most often. We are constantly updating our R Notebook offerings, with several new ones planned for 2021.

iMotions Remote Capabilities

Remote Data Collection

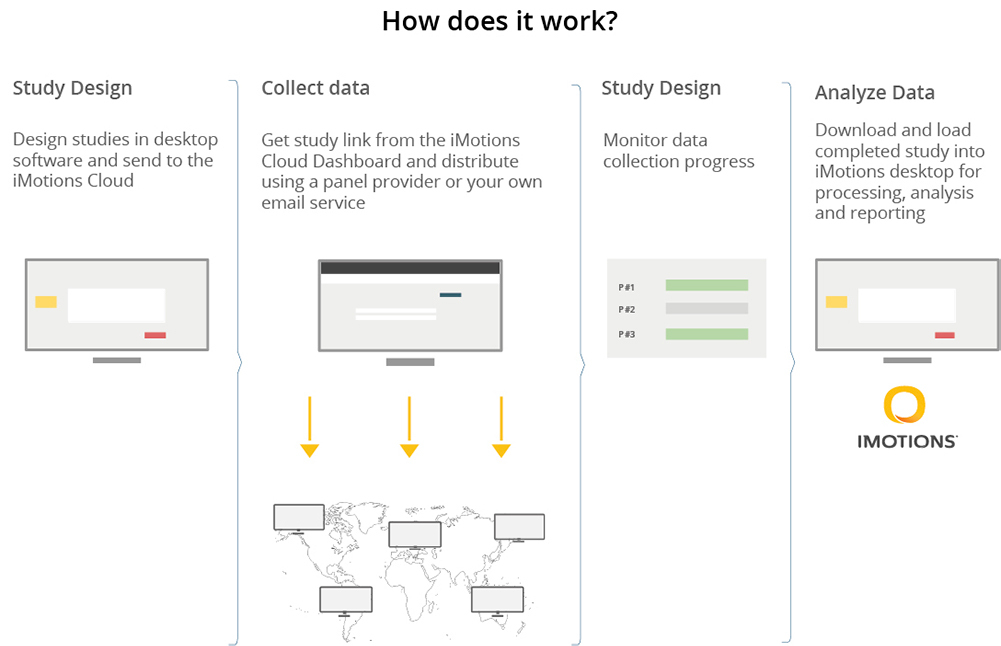

Spring 2021 also sees the introduction of iMotions’ latest offering: the iMotions Remote Data Collection module. Remote data collection is needed now more than ever, and this module makes it possible.

iMotions Remote Data Collection features both webcam-based eye tracking and facial expression analysis. Researchers simply send a link to participants, who are then taken through the experiment setup (with checks to ensure that lighting and head position are optimal) before the actual experiment begins. The entire process takes place in the participant’s browser, from setup to viewing and interacting with screen-based stimuli (videos, images, sound, websites, etc.) to completing surveys.

The experiment is configured entirely in iMotions Core on the researcher’s side, and all the researcher has to do is receive the data from the secure server. From there, it’s just a matter of conducting your analysis using Core plus the Eye Tracking and/or Facial Expression Analysis modules as you normally would, with their robust visualization, annotation, and analysis tools. Eye tracking and facial expression data can therefore be collected from multiple participants simultaneously, anywhere in the world, with just an internet connection.

This allows researchers to open up and scale experiments and deploy them rapidly. New pools of participants can be reached easily and cost-effectively. Measurement of emotional responses (with facial expression analysis using Affectiva or RealEyes) and attentional responses (with webcam-based eye tracking) to on-screen stimuli can now be taken, en masse, to exactly the participants you’re after.

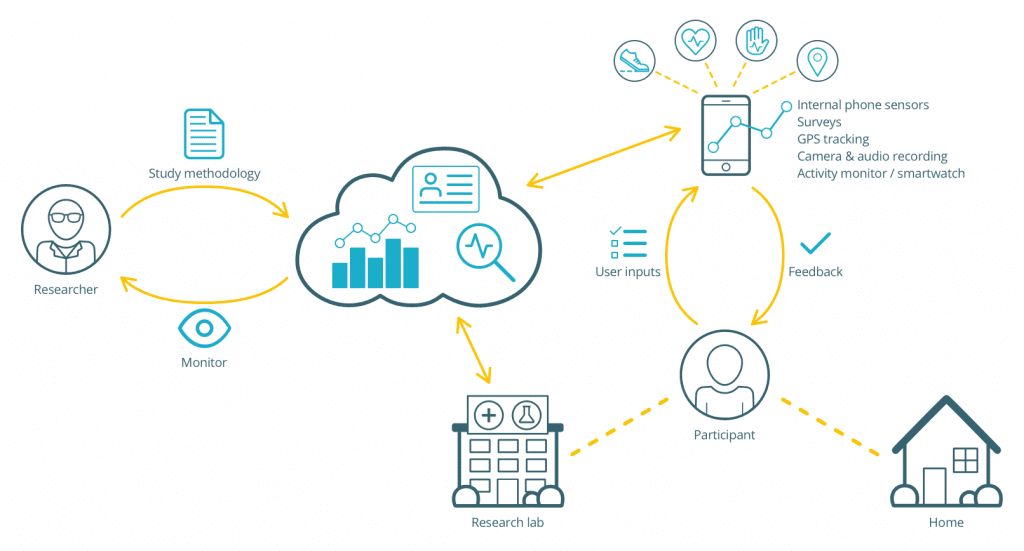

Mobile Research Platform

The third step in the iMotions evolution, the iMotions Mobile Research Platform, is also a major focus for us in 2021. The platform lets you do research ‘in the wild’, in real-world settings, to obtain ecological validity. It is built as a modular system that combines wearable technology with the sensors and data logs embedded in smartphones to capture the human experience as it happens, allowing fine-grained, continuous collection of people’s behaviors. It can also embed and trigger surveys and questionnaires that capture conscious feedback in the moment. We are currently running pilot studies on this platform with select clients, who are helping us shape the product for a broad range of application areas such as healthcare, longitudinal research, mobile and mobility studies, retail environments, and more.

iMotions Research Enablement

More than just Software

Advancing research within academia and industry is at the very core of the iMotions ethos. We want to help create a better understanding of human behavior, no matter how much you know about research already.

All iMotions customers have a dedicated Customer Success Manager who offers guidance and personalized support on using iMotions. Your Customer Success Manager also runs your Onboarding training: individualized, one-on-one sessions that set your research up for success from the moment you purchase iMotions software. We also offer specialized, intensive training through our iMotions Academy program, and our Services and Product Specialist teams can tailor workshops and consultancy to your needs, giving you the expertise of experienced researchers (many of whom have PhDs) to tackle any challenges that arise. Whatever you face in your research, we are there to help you solve it.

Our goal at iMotions has always been to expand and advance with the future of human behavior research. We have built upon our central software, which is used worldwide – in the field and in the lab – and have created new ways to study behavior remotely. The world of research has been changing, and we have been excited to change with it.