audEERING and iMotions collaborate to advance human behavioral research through voice analysis

- The world’s leading software provider of human behavior insights, iMotions, integrates voice analysis technology from the German AI company audEERING

- The collaboration brings together two companies steeped in measuring, identifying, and analyzing human expression, with the aim of evaluating human behavior, emotion, and biomarkers more accurately

- A wide range of applications in science and industry underlines the importance of the partnership

Copenhagen, 10.08.23 – audEERING, the EU market leader for AI-based audio analysis, and iMotions, the world’s leading software platform for studying the drivers behind human behaviour, are partnering to expand and enhance human behaviour research and analysis capabilities. With the integrated Voice AI component, the multimodal software suite takes on another dimension that provides a deeper, more accurate and comprehensive understanding of human behavior.

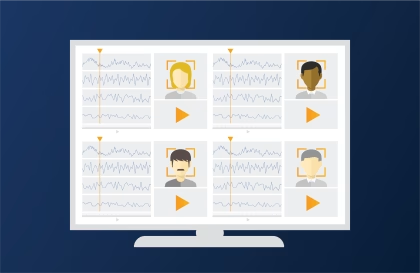

The integration of audEERING’s advanced AI-powered audio analysis technology, devAIce, into iMotions’ comprehensive biometric research platform allows researchers, market researchers and academic professionals to gain deeper insights into people’s underlying emotional responses and experiences. Through audEERING’s voice analysis technology, researchers can access its multidimensional emotion model to measure a voice’s valence, arousal and dominance. Valence refers to the positivity or negativity of an emotional expression, arousal to its energy level and dominance to the degree of control it conveys. The voice data can then be integrated into the iMotions platform, where it is synchronized and analyzed alongside several other measures of nonconscious functions, including eye tracking, skin conductance, EEG, ECG, EMG and facial expression analysis.

“The human voice is adding another dimension to understand human’s emotions that drive their behaviour and decisions. As a multimodal research tool, we want to incorporate this dimension to make voice-based markers accessible to researchers already working with signals collected from the eyes, face, or skin,” said Peter Hartzbech, CEO of iMotions. “The more of a person’s physiology you can monitor, the deeper insights you can derive and truly understand the reasoning behind that person’s behaviour or decision making.”

“As a company with an internationally renowned scientific background, it is important to us to continuously improve the quality of research methodologies,” said Dagmar Schuller, AI expert and CEO of audEERING. “iMotions is a world-renowned research platform and we are very pleased that they have chosen us as their voice AI partner. Together, we will make a significant contribution to improving scientific processes and usher in a new era for human behaviour analysis.”

audEERING’s AI technology is already in use outside scientific research: devAIce is being used in various private-sector industries, for example in the development of empathetic robots for the care sector or to improve the customer experience in call center interactions. The combination of audEERING’s patented AI audio analysis algorithms with iMotions’ biometric measurements enables detailed measurement of emotions in a wide range of application areas. From product development, advertising and market research to diagnostic support and improved monitoring and therapy in clinical settings, the collaboration opens new possibilities to better understand and analyze human emotions.

About audEERING

audEERING was founded in 2012 as a spin-off of the Technical University of Munich and is a leading innovation driver in the field of AI-based audio analysis. Using machine learning methods such as deep learning, the company develops algorithms based on neural networks and trains them with promising methods such as unsupervised learning. audEERING’s patented technologies make it possible to use a variety of different models for automated audio analysis. In this way, human characteristics and conditions, health-relevant voice biomarkers, sound events, as well as acoustic environments and scenes are detected robustly and reliably in real time.

Available as an SDK as well as SaaS, the core product devAIce recognizes speaker states and characteristics in full compliance with data protection regulations. The dimensional model it uses analyzes, among other things, activation, valence and dominance. In addition, the recognition of age groups and gender is possible. Through audEERING’s AI platform, AI SoundLab, voice biomarkers can be reliably analyzed in connection with studies, research and concrete questions, and longitudinal analyses can lead to improved insights in the area of consumer and patient satisfaction and wellbeing.

audEERING’s customers include multinational corporations such as GN Group, iMotions, BMW, Daimler, Red Bull Media House, Deutsche Telekom and Ipsos. For its AI technology, audEERING was awarded the VDE Award 2019, the VisionAward 2019 and the Innovation Award Bavaria 2018, among others. It was also named “Innovator of the Year” of the International Digital World Cup Series in 2017 and “Vendor to Watch for AI” by Gartner, Inc. audEERING is internationally renowned in the scientific community and is involved as a consortium partner in a large number of research funding projects of the EU, BMBF, BMWK and EIT, including the projects SPEAKER (intelligent voice assistants), EcoWeb (depression), MARVEL (smart city), ERIK (autism) and WorkingAge (smart working).

About iMotions

Founded in 2005 and headquartered in Copenhagen, iMotions, a SaaS company, has developed the world’s leading human behavior software platform. More than 1,300 organizations around the world – from leading academic institutions to global brands to highly respected healthcare organizations – use iMotions to access real-time and nonconscious emotional, cognitive and behavioral data. By integrating and synchronizing all types of sensors into a single platform, iMotions provides researchers with access to deeper and richer insights – and the most complete picture of human behavior. For more information, visit iMotions.com.