Break The Boundaries For Your Research

With the iMotions RDC (Remote Data Collection) Platform, you can measure human behavior beyond the lab, capturing meaningful data from participants anywhere in the world. Even in remote settings, you can uncover insights across three key behavioral categories:

- Arousal – Analyze physiological activation levels through webcam-based respiration monitoring and voice analysis.

- Attention – Track where people look and for how long using webcam-based eye tracking.

- Emotions – Detect facial expressions and voice tone to understand emotional reactions.

Browser-based biometric data

One of the biggest advantages of remote research with the iMotions RDC Platform is its simplicity: all data collection is done via a webcam and a microphone.

That’s it.

Using just these everyday tools, you can capture data related to visual attention, emotional expressions, voice tone, and even respiration patterns. This low barrier to entry allows you to reach participants across the globe while still collecting high-quality behavioral data.

How it Works

Modules Supporting Remote Data Collection

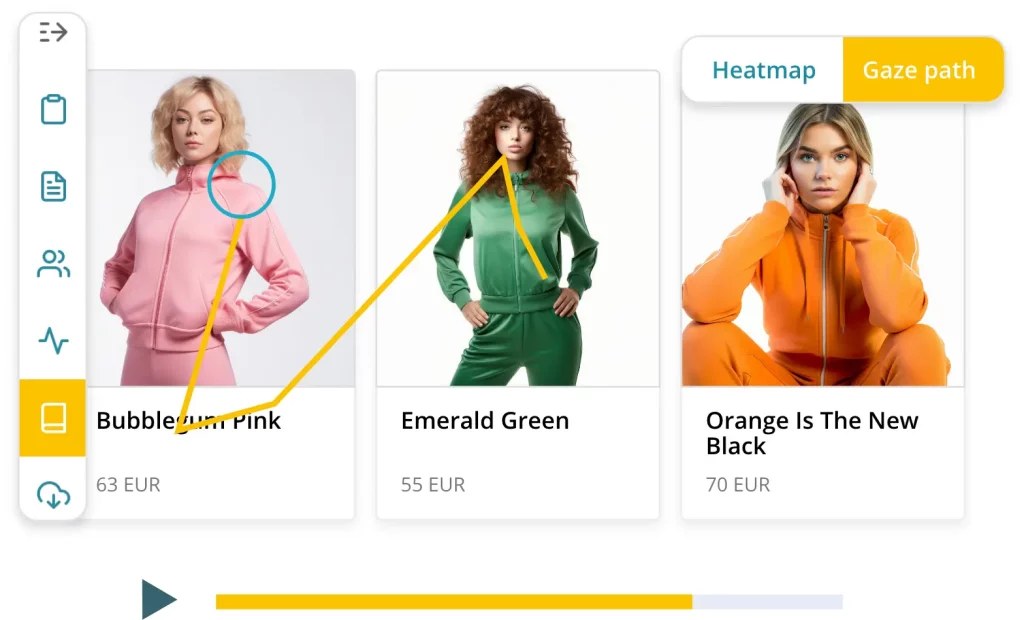

Webcam Eye Tracking

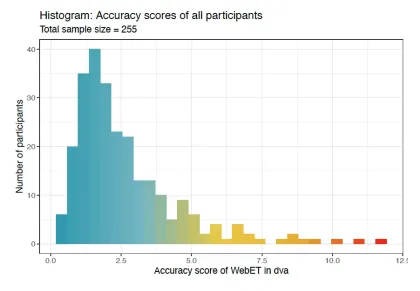

The Most Accurate Webcam Eye Tracking Available

Based on state-of-the-art technology, iMotions WebET 3.0 is the most accurate and robust webcam eye tracking solution available on the market.

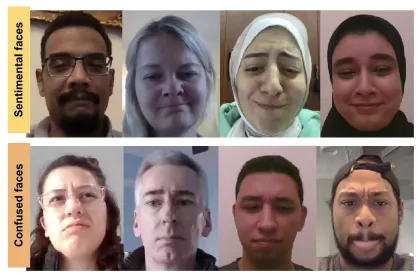

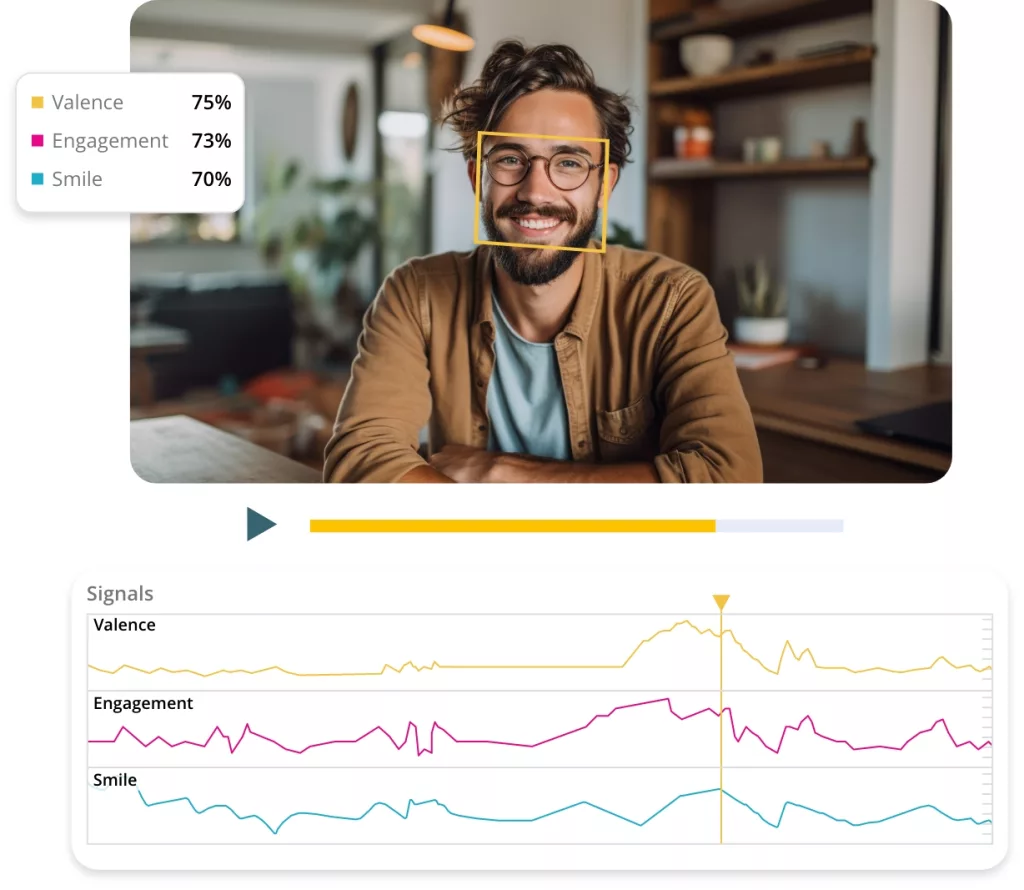

Facial Expression Analysis

Powered by Affectiva Facial Coding AI

Remote Data Collection supports all of the features of the Facial Expression Analysis module.

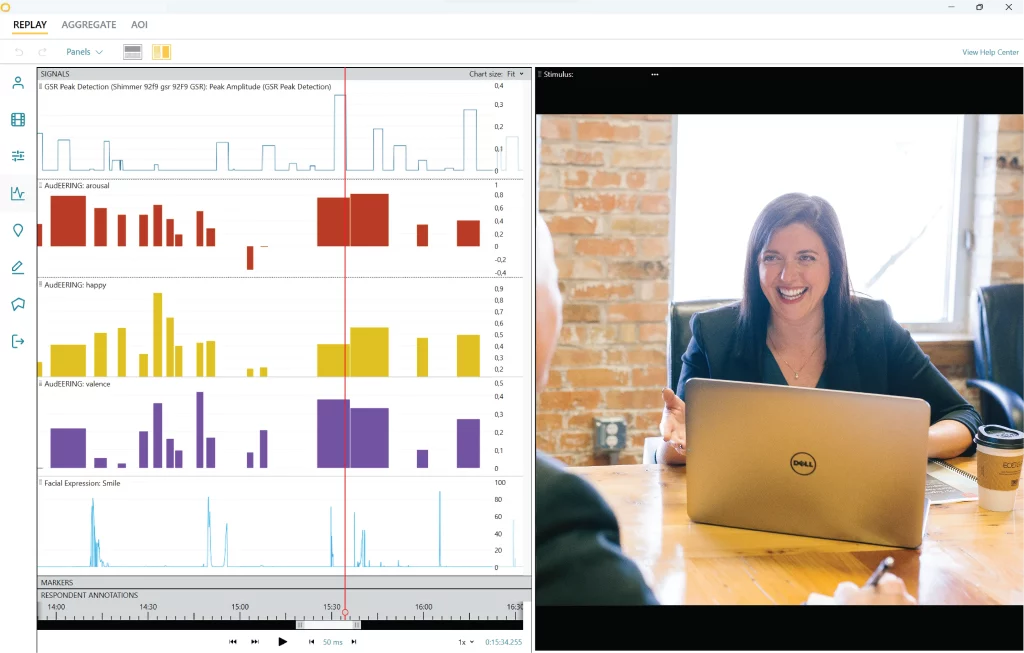

Voice Analysis

Powered by audEERING’s devAIce® AI models

Go beyond words by analyzing voices for both emotion and emotional valence. Explore fundamental voice features with metrics relating to prosody, including pitch, loudness, speaking rate, and intonation. Get data regarding perceived gender and age.

Find out more about the Voice Analysis Module.

Webcam Respiration

A Breakthrough in Scalable, Non-Invasive Respiration Monitoring

The iMotions Webcam Respiration Module sets a new standard in remote, non-invasive biometric data collection. With this module, researchers can monitor participant respiration accurately via a standard webcam, without specialized contact-based hardware such as respiration belts and without the lab-bound logistics that such hardware usually dictates.

Ready For Online Research?

Get in touch and book a demo today to find out exactly how this can help you reach your research goals.

- Eye Tracking Accuracy Threshold

- Collect Data on Desktop and Tablets

- Most Advanced Facial Coding AI

- Supports images/videos/websites

- GDPR Compliant (EU/USA)

- Third-party Survey Support*

*Supports the majority of third-party survey providers.

Stay up to date with the latest releases and features on my.imotions.com/#releasenotes

Features

Webcam Eye Tracking

Turn any webcam into an eye tracker and conduct studies using just a regular laptop.

Facial Expression Analysis

Carry out facial expression analysis using Affectiva Facial Coding AI (AFFDEX).

Online Surveys

Remote Data Collection has its own advanced survey tool built in, supporting themes, videos, images, and logic. You can also use a range of the most popular survey builders through our third-party integration options.

Remote Studies

Study management and data collection both happen via a cloud infrastructure, making it possible to deploy a study to anyone in the world with an internet connection.

GDPR Compliant

With servers in both Germany and the United States, you can safely collect participant data without worrying about data security.

Voice Analysis

The Remote Data Collection platform supports voice analysis using audEERING’s devAIce.

Webcam Respiration

Webcam respiration is supported in Remote Data Collection and enables you to measure arousal in response to stimuli, all through a webcam.