Discover how facial EMG captures subtle emotional responses by measuring electrical activity in facial muscles. More sensitive than webcam-based analysis, it detects even suppressed expressions and works in mobile or VR settings. Commonly used to assess emotional valence, fEMG supports research in psychology, healthcare, and human behavior where visible facial data is limited.

Facial electromyography (facial EMG, or fEMG) has been used for more than 30 years in both academic and commercial research to differentiate emotional expressions, and it is a well-established alternative to webcam-based facial expression analysis.

We often talk about Facial Expression Analysis as an important tool for measuring valence. However, there are many scenarios where webcam-based facial expressions cannot be collected.

Maybe it’s difficult to get consistent video of your respondent’s face because they’re moving around in a real-life environment. Perhaps your respondents are using virtual reality, and you can’t capture facial expressions behind a VR headset. Or perhaps you are looking for facial expressions so subtle that they cannot be observed via a webcam.

To help you understand what fEMG is and when it’s best used, we’ll cover the background of fEMG and what it can do, and provide an overview of its role in research.

What is Facial Electromyography?

First, let’s discuss electromyography (EMG). In short, EMG is the measurement of the underlying electrical activity that’s generated when muscles contract (if you have some time, we have a great introduction, Electromyography 101, that we highly encourage you to read).

When we generate a movement, an electrical action potential travels from the brain to specialized motor neurons in the spinal cord or, for the muscles of the face, the brainstem. These motor neurons release acetylcholine into the neuromuscular junction, which triggers a release of calcium ions within the muscle cell. This calcium allows the myofilaments, actin and myosin, to slide past one another, which shortens the muscle cells and produces an overall muscle contraction.

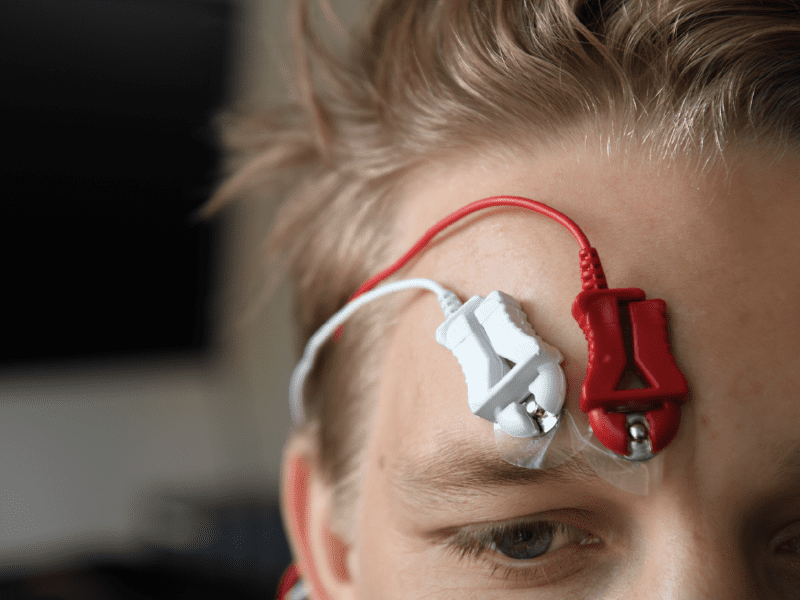

Similar to other passive recording tools like electrocardiography (ECG) or electroencephalography (EEG), we can observe this complex electrical activity by placing electrodes on the surface of the skin above the muscle fibers. By measuring this electrical activity, we can get a sense of when a muscle is activated, as well as the strength of that contraction.

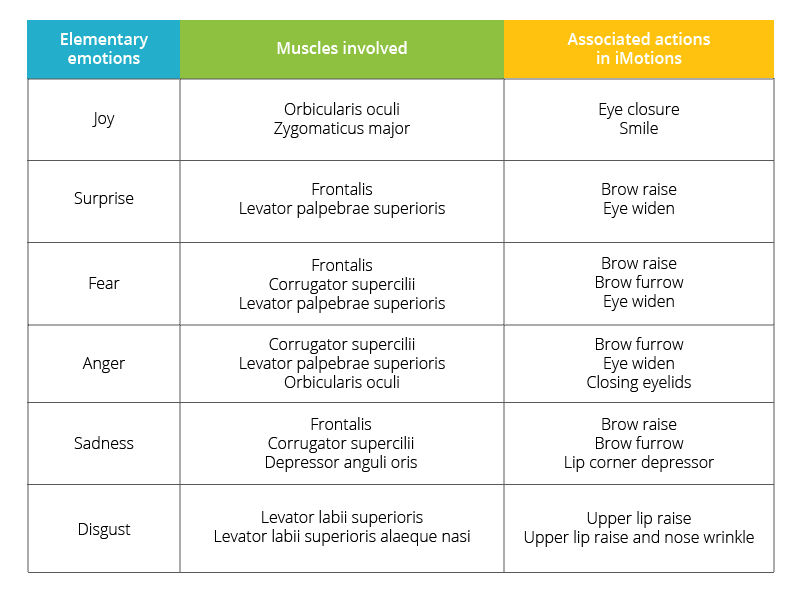
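To make this concrete, here is a minimal Python sketch of how a surface EMG trace could be turned into an estimate of when a muscle is active and how strongly. It is an illustration under stated assumptions, not the processing pipeline of any particular software. The signal is synthetic, and the function names (`emg_envelope`, `detect_activation`), the 11-sample smoothing window, and the "baseline mean plus 3 standard deviations" threshold are our own hypothetical choices. Real recordings would normally also be band-pass filtered before this step.

```python
from statistics import mean, stdev


def emg_envelope(samples, window):
    """Remove the DC offset, full-wave rectify, and smooth with a moving average."""
    offset = mean(samples)
    rectified = [abs(s - offset) for s in samples]
    half = window // 2
    envelope = []
    for i in range(len(rectified)):
        start = max(0, i - half)
        stop = min(len(rectified), i + half + 1)
        envelope.append(mean(rectified[start:stop]))
    return envelope


def detect_activation(envelope, baseline_len, k=3.0):
    """Mark samples whose envelope exceeds baseline mean + k * baseline SD."""
    baseline = envelope[:baseline_len]
    threshold = mean(baseline) + k * stdev(baseline)
    return [value > threshold for value in envelope]


# Synthetic toy signal (not real data): rest, a stronger burst, then rest again.
rest = [0.01 * (-1) ** i for i in range(200)]
burst = [0.5 * (-1) ** i for i in range(100)]
signal = rest + burst + rest

envelope = emg_envelope(signal, window=11)
active = detect_activation(envelope, baseline_len=100)

onset = active.index(True)  # first sample flagged as active
strength = max(envelope)    # peak envelope amplitude, a proxy for contraction strength
print(onset, strength)
```

The flagged samples answer the "when" question and the envelope amplitude answers the "how strong" question. Because of the smoothing window, the detected onset falls a few samples before the burst itself begins.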

Facial EMG is electromyography applied specifically to the muscles of the face. If you’ve worked with webcam-based facial expression analysis before, you’ll recall that webcam-based engines like Affectiva are based on Ekman & Friesen’s Facial Action Coding System (FACS) [1], where facial expressions are divided into Action Units representing individual movements of the face.

Facial EMG can also be considered within the FACS framework, as most Action Units described in FACS can be traced to the activity of a single facial muscle. In fact, a 2018 study using iMotions showed that the output of Affectiva’s webcam-based facial expression engine correlates highly with muscle activation characterized by facial EMG [2].

What information does fEMG give you?

An Indication of Valence

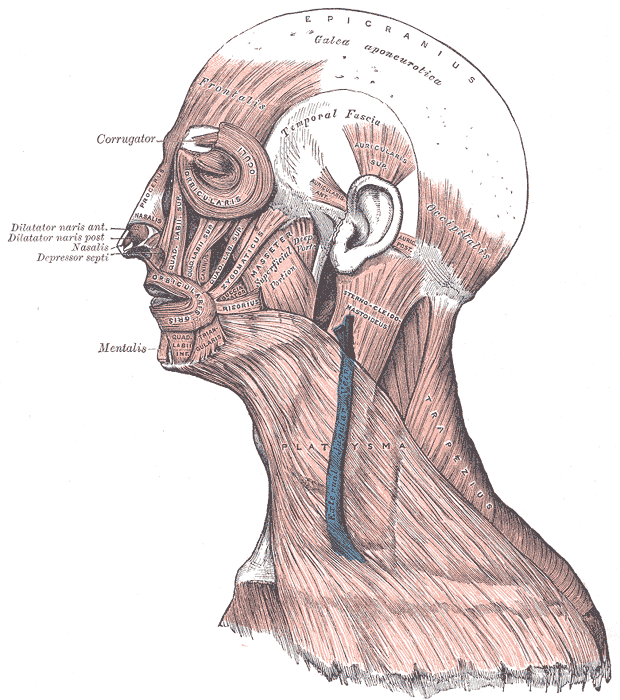

There are 43 muscles in the face [3, 4], most of which are controlled by the facial nerve, and many of them can be recorded using EMG depending on your research question and the variables you’re interested in. However, given the intrusiveness and logistical difficulty of recording from many muscles at once, two muscles are the most commonly studied: the zygomaticus major, responsible for smiling, and the corrugator supercilii, responsible for furrowing of the brow.

These two muscles have been the focus of a large body of research, since they can represent positive and negative valence respectively. Facial EMG studies have found that activity of the corrugator muscle corresponds strongly to negatively valenced reactions and self-reported negative moods. Meanwhile, activity of the zygomaticus muscle is positively associated with positive emotional stimuli and positive mood states [5, 6, 7, 8, 9].
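As a rough illustration of how these two muscles might be summarized for a single trial, the sketch below compares each muscle’s response amplitude against its own pre-stimulus baseline. Everything here is hypothetical: the numbers are made up, the function names are ours, and the difference score `valence_index` is an illustrative assumption of ours, not a metric taken from the studies cited above.

```python
from statistics import mean


def change_from_baseline(baseline, response):
    """Mean response amplitude minus mean pre-stimulus baseline amplitude."""
    return mean(response) - mean(baseline)


def valence_indicators(zyg_baseline, zyg_response, corr_baseline, corr_response):
    """Summarize zygomaticus and corrugator changes for one trial."""
    zyg_change = change_from_baseline(zyg_baseline, zyg_response)
    corr_change = change_from_baseline(corr_baseline, corr_response)
    return {
        "zygomaticus_change": zyg_change,
        "corrugator_change": corr_change,
        # Hypothetical composite: above zero leans positive, below zero leans negative.
        "valence_index": zyg_change - corr_change,
    }


# Made-up envelope amplitudes (arbitrary units) for one pleasant stimulus.
trial = valence_indicators(
    zyg_baseline=[2.0, 2.0, 2.0],
    zyg_response=[5.0, 5.0, 5.0],
    corr_baseline=[3.0, 3.0, 3.0],
    corr_response=[2.0, 2.0, 2.0],
)
print(trial)
```

In this toy trial the zygomaticus rises above baseline while the corrugator relaxes below it, which is the pattern the literature associates with a positive response. Correcting each muscle against its own baseline matters because resting muscle tone differs between people and between electrode placements.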

Unparalleled Sensitivity

Facial EMG is a more sensitive measure of muscle activation than webcam-based analysis, since it picks up underlying electrical currents that wouldn’t be visible through a webcam [10].

One study even suggests that when respondents are explicitly instructed to inhibit their facial expressions, EMG is still capable of detecting minute changes in muscle activation despite conscious suppression [11].

Flexibility

The various hardware options available for EMG allow researchers to record facial expression data in circumstances when webcam-based measures are not possible. Bluetooth-enabled or wireless devices by Shimmer or BIOPAC can be used for mobile collections in real-life environments. The electrodes for EMG can also be placed easily under a VR headset.

The main downside of these hardware options is the limited number of muscles you can record from. For example, the Shimmer EXG can record two muscles at a time. The BIOPAC wireless Bionomadix can also record two muscles simultaneously, but the wired EMG100C module records from only one.

Updates to fEMG 2023

Since we published this article in 2018, a lot has happened in the field of EMG and fEMG research and technology. Here are a few of the advancements that have happened in the last five years:

- High-Resolution Surface Electromyography (HR-sEMG): Recent studies have shown that HR-sEMG is highly effective in distinguishing between different facial movements and emotions. This method uses a multi-channel approach for recording, providing more detailed and accurate muscle activity patterns. A study demonstrated that HR-sEMG can reliably differentiate between the six basic emotions with very low interindividual variability. The Kuramoto scheme, a landmark-oriented electrode arrangement, was found to be more effective than the Fridlund scheme, particularly for the lower face. This challenges the traditional focus on the upper face in psychological experiments. Additionally, the study highlighted the limitations of HR-sEMG in settings with spontaneously displayed expressions, noting the need for a wireless solution that can cover the entire face. [12]

- Flexible Noninvasive Electrodes (FNEs) for sEMG: The development of FNEs has been significant in improving the quality of sEMG signals. These electrodes offer better signal-to-noise ratio, flexibility, biocompatibility, and adhesion to skin, which is crucial for long-term recording. FNEs are designed to match the mechanical properties of human skin, thus minimizing discomfort and irritation during measurement. They are also being developed with breathability in mind to prevent issues like skin irritation and motion artifacts caused by sweat accumulation. [13]

- Wireless EMG Technology: A recent study proposed a portable wireless transmission system for the multi-channel acquisition of EMG signals. This system uses electrodes placed at the periphery of the face to reduce inhibition of facial muscle movement. It employs Wi-Fi technology for increased flexibility and has been shown to effectively recognize various facial movements.

Where is facial EMG commonly used?

Facial EMG has a wide variety of uses in the medical field, particularly in movement and rehabilitation research pertaining to neurological and neuromuscular conditions such as ALS, Parkinson’s disease, and stroke. Facial EMG has also been used in emotion studies with individuals with autism.

More recently, facial EMG has seen increasing use in market research, gaming, and VR. It is also commonly used in web usability studies and human factors research, as it provides sensitive measures of valence that might not be picked up using webcam-based methods.

While your exact research question will determine the usefulness of incorporating fEMG into your work or research, it offers the possibility of unparalleled insights into emotional expressions.

References

1. Ekman, P., & Friesen, W. (1978). Facial Action Coding System: A Technique for the Measurement of Facial Movement. Consulting Psychologists Press, Palo Alto.

2. Kulke, L., Feyerabend, D., & Schacht, A. (2018). Comparing the Affectiva iMotions Facial Expression Analysis Software with EMG. https://doi.org/10.31234/osf.io/6c58y

3. Marur, T., Tuna, Y., & Demirci, S. (2014). Facial anatomy. Clin. Dermatol., 32, 14–23. https://doi.org/10.1016/j.clindermatol.2013.05.022

4. von Arx, T., Nakashima, M. J., & Lozanoff, S. (2018). The Face – A Musculoskeletal Perspective. A literature review. Swiss Dent J., 128, 678–688.

5. Cacioppo, J. T., Petty, R. E., Kao, C. F., & Rodriguez, R. (1986). Central and peripheral routes to persuasion: An individual difference perspective. Journal of Personality and Social Psychology, 51, 1032–1043.

6. Dimberg, U. (1990). Perceived unpleasantness and facial reactions to auditory stimuli. Scand. J. Psychol., 31, 70–75.

7. Lang, P. J., Greenwald, M. K., Bradley, M. M., & Hamm, A. O. (1993). Looking at pictures: affective, facial, visceral, and behavioral reactions. Psychophysiology, 30, 261–273. https://doi.org/10.1111/j.1469-8986.1993.tb03352.x

8. Schwartz, G. E., Ahern, G. L., & Brown, S. (1979). Lateralized facial muscle response to positive and negative emotional stimuli. Psychophysiology, 16, 561–571.

9. Sirota, A. D., & Schwartz, G. E. (1982). Facial muscle patterning and lateralization during elation and depression imagery. Journal of Abnormal Psychology, 91, 25–34.

10. Cacioppo, J. T., Petty, R. E., Losch, M. E., & Kim, H. S. (1986). Electromyographic activity over facial muscle regions can differentiate the valence and intensity of affective reactions. J. Pers. Soc. Psychol., 50, 260–268. https://doi.org/10.1037/0022-3514.50.2.260

11. Cacioppo, J. T., Bush, L. K., & Tassinary, L. G. (1992). Microexpressive facial actions as a function of affective stimuli: Replication and extension. Personality and Social Psychology Bulletin, 18, 515–526.

12. Guntinas-Lichius, O., Trentzsch, V., Mueller, N., et al. (2023). High-resolution surface electromyographic activities of facial muscles during the six basic emotional expressions in healthy adults: a prospective observational study. Sci Rep, 13, 19214. https://doi.org/10.1038/s41598-023-45779-9

13. Cheng, L., Li, J., Guo, A., et al. (2023). Recent advances in flexible noninvasive electrodes for surface electromyography acquisition. npj Flex Electron, 7, 39. https://doi.org/10.1038/s41528-023-00273-0
