Date: December 2022

Conference: 10th Asian Symposium on Process Systems Engineering

Authors: Divya Seernani, Pernille Bülow, Jessica M. Wilson

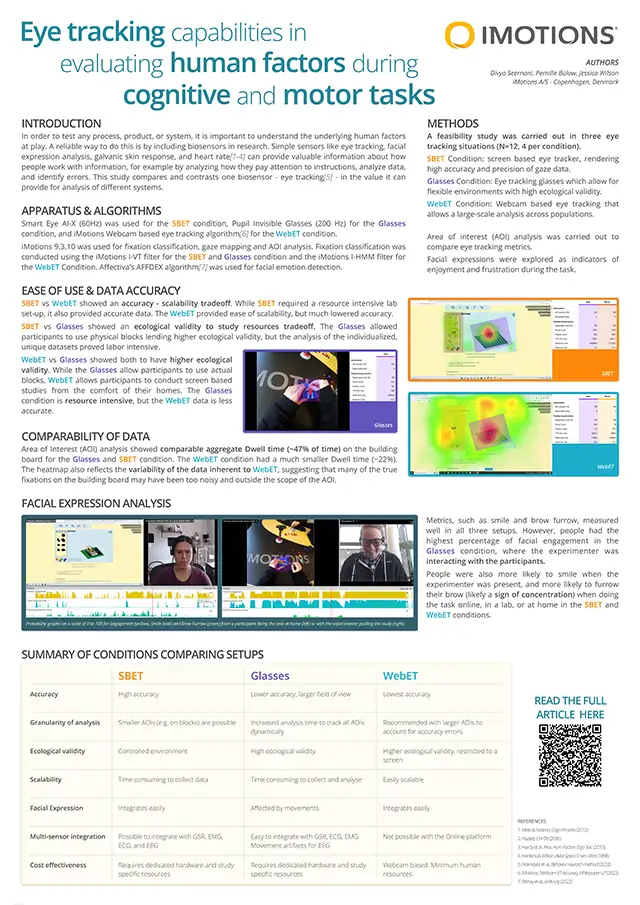

Background: To test any process, product or system, it is important to understand the underlying human factors at play. Biosensor research is a strong way to do this. Simple sensors, such as those measuring eye movements, facial expressions, galvanic skin response, and heart rate, can provide valuable information about how people work with the information in front of them, for example by showing how they attend to instructions, analyse data and identify errors.

Methods: We test the value that one such biosensor, eye tracking, can provide for the analysis of different systems, and the strength of combining it with other sensors such as facial expression analysis. In a feasibility study, we use an easily transferable block-building task in three different eye-tracking situations (N=12, 4 per condition): (1) a screen-based eye tracker that provides high accuracy and precision on where someone is looking; (2) eye-tracking glasses that capture coarser gaze parameters in flexible environments with high ecological validity; and (3) webcam-based eye tracking that allows large-scale analysis across populations. Eye-tracking metrics such as fixation duration and revisit count were used to understand task comprehension and provide insights into the building process. Facial expressions such as brow furrow, anger and confusion were explored as indicators of frustration during the building process.

Results: Each eye-tracking method has unique strengths in studies that capture the human factors involved in building with blocks. Most prominently, a lack of attention to instructions was correlated with task performance, and revisits to the various elements of the task (instructions, blocks left, blocks built) were associated with better comprehension. Facial expression analysis was a good supplement for understanding frustration levels throughout the process.
Scanpaths from all three conditions have been compared, with special notes on feasibility-accuracy trade-offs. While the screen-based eye tracker was the most accurate, it may lack ecological validity in some conditions, such as those involving physical blocks or cooperation with teammates. The eye-tracking glasses were found to be the most ecologically valid, but study design and analysis need to be streamlined for optimal results. Finally, webcam eye tracking, although the least accurate, can make up for this deficit through its ease of scaling, and it benefits from the analysis approach of screen-based studies. Understanding these strengths and trade-offs will help researchers pick the optimal tool for their specific research questions and setup. Lab-based studies have been contrasted with field-based studies and a digital, online solution, and caveats for each kind of research design have been discussed. We conclude that each eye tracker provides unique strengths for evaluating human factors during a cognitive and physical task.
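
To illustrate the two eye-tracking metrics named in the Methods, the following is a minimal sketch of how mean fixation duration and revisit count could be derived per area of interest (AOI). It is not the analysis pipeline used in the study. The function name `aoi_metrics`, the input layout (an ordered list of AOI label and duration in milliseconds), the AOI labels, and the definition of a revisit as any return to an AOI after gaze has left it are all assumptions, and the sample values are made up for illustration.

```python
from collections import defaultdict
from itertools import groupby


def aoi_metrics(fixations):
    """Summarise an ordered fixation sequence per AOI.

    fixations: list of (aoi_label, duration_ms) in temporal order
    (hypothetical layout, not a specific eye tracker's export format).
    """
    durations = defaultdict(list)
    visits = defaultdict(int)
    # Consecutive fixations on the same AOI form one visit.
    for aoi, run in groupby(fixations, key=lambda f: f[0]):
        visits[aoi] += 1
        durations[aoi].extend(duration for _, duration in run)
    return {
        aoi: {
            "fixation_count": len(ds),
            "mean_fixation_ms": sum(ds) / len(ds),
            # Assumed definition: revisits = visits after the first one.
            "revisits": visits[aoi] - 1,
        }
        for aoi, ds in durations.items()
    }


# Made-up example sequence, not data from the study.
example = [
    ("instructions", 300), ("instructions", 200),
    ("blocks_left", 150),
    ("blocks_built", 400),
    ("instructions", 250),
    ("blocks_built", 200), ("blocks_built", 300),
]
metrics = aoi_metrics(example)
for aoi, summary in metrics.items():
    print(aoi, summary)
```

Grouping consecutive fixations into visits keeps the revisit count independent of how many fixations occur within a single visit, which is one common convention; other definitions (for example, counting every visit) would change only the last field.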