Eye tracking research is rapidly expanding across retail, automotive, healthcare, and UX design. Growth is driven by mobile and remote eye tracking, often combined with biosensors like EEG and GSR to better understand attention and behavior. These methods are shaping more realistic, multimodal human-centered research.


2020 has come and gone, and although it was a challenging year, we look optimistically at the future and the opportunities that lie ahead for biosensor research. While the software is of course central to any research setup with iMotions, there can be no biosensor research without the biosensors. So, in this blog post we break down the latest trends in our hardware partners' industries, look at novel research in each modality, and share how iMotions views the latest trends in human behavior research.

iMotions aims to be at the forefront of sharing knowledge about, promoting, and enabling biosensor research, helping it reach new heights. Here, we highlight trends in the main hardware modalities used to gain a holistic understanding of the emotional human experience. The first modality we will look at is eye tracking.

Trends in Mobile Eye Tracking

Eye tracking has been the most accessible biosensor hardware for some time, and its visibility in the market is steadily increasing. More and more researchers are sharing data sets and methodologies, and collaborating to improve data quality and standardize eye tracking techniques.

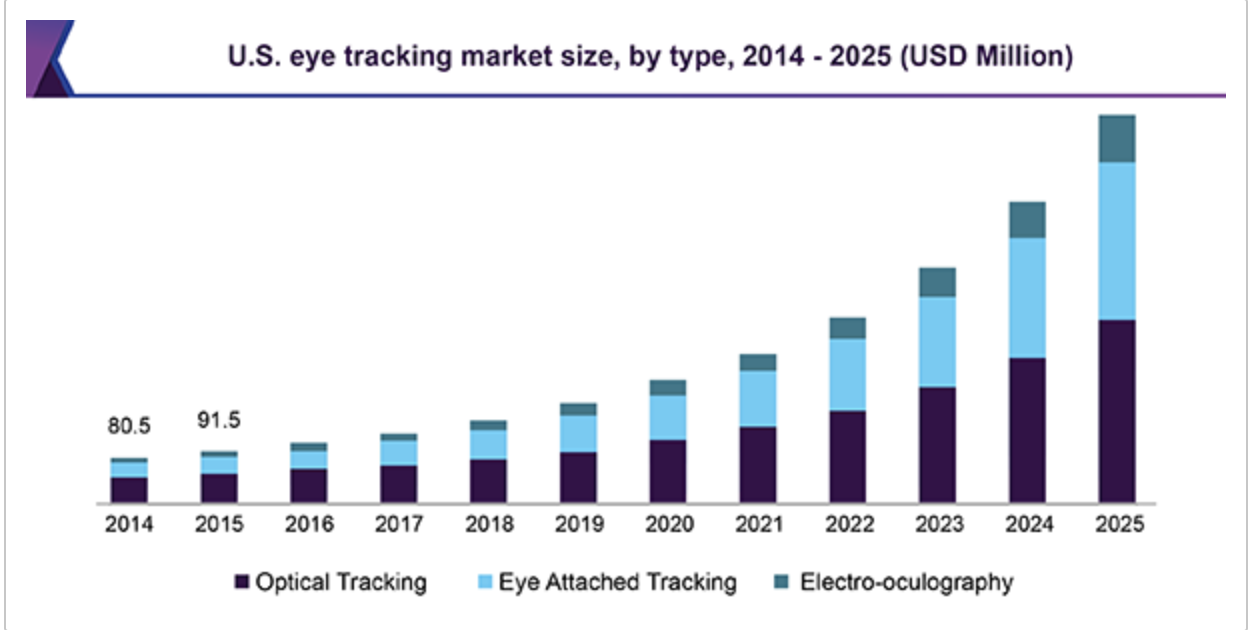

The global eye tracking market is expected to grow from USD 368 million in 2020 to USD 1.75 billion by 2025, at a CAGR of 26.3% during the forecast period (Grand View Research).
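Compound annual growth rate (CAGR) is the constant yearly rate that links a starting value to an ending value: CAGR = (end / start)^(1 / years) - 1. As a quick way to sanity-check forecast figures like the ones above, here is a minimal Python sketch; the function names `cagr` and `project` are our own, chosen for illustration, and the inputs are simply the endpoints quoted in the sentence above (in USD millions, over the five years from 2020 to 2025).

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Constant yearly growth rate that turns start_value into end_value
    over the given number of years (compounded once per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value reached by compounding start_value at `rate` for `years`."""
    return start_value * (1.0 + rate) ** years


# Endpoints quoted in the text, in USD millions (2020 -> 2025 = 5 years).
start_2020 = 368.0
end_2025 = 1750.0

implied_rate = cagr(start_2020, end_2025, 5)
print(f"Implied CAGR for 368M -> 1750M: {implied_rate:.1%}")
print(f"368M compounded at 26.3% for 5 years: {project(start_2020, 0.263, 5):.0f}M")
```

Running it shows an implied rate of roughly 36.6% per year for these endpoints, while compounding USD 368 million at 26.3% for five years reaches about USD 1.18 billion.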

The largest area of growth for eye tracking through 2020 was consumer behavior research, primarily mobile eye tracking, with the modality seeing increasing demand in retail, specifically in the FMCG (fast-moving consumer goods) sector. This growth means that conducting studies with eye tracking glasses in retail environments such as shops is becoming mainstream and is no longer seen as ahead of the curve (MarketsandMarkets).

At iMotions, we are excited to note that demand for eye tracking software is expected to grow as well, especially for consumer behavior testing and product R&D aimed at better understanding consumer preferences (Research and Markets).

Learn more: How to Do Product Testing: In-Store Shelf Testing

Eye tracking in automotive and transportation environments is also predicted to see strong growth, primarily within driver monitoring systems. Eye tracking has been shown to be an effective technology for detecting drowsy or distracted driving, and automotive researchers and companies alike are testing and integrating eye tracking methods in driving environments, both real and simulated. Training in the automotive and aviation sectors also contributes to this growth, as eye tracking is increasingly adopted in VR/AR training environments such as flight and driving simulators (Research and Markets).

Learn more: What is VR Eye Tracking? [And How Does it Work?]


Trends in Remote Eye Tracking

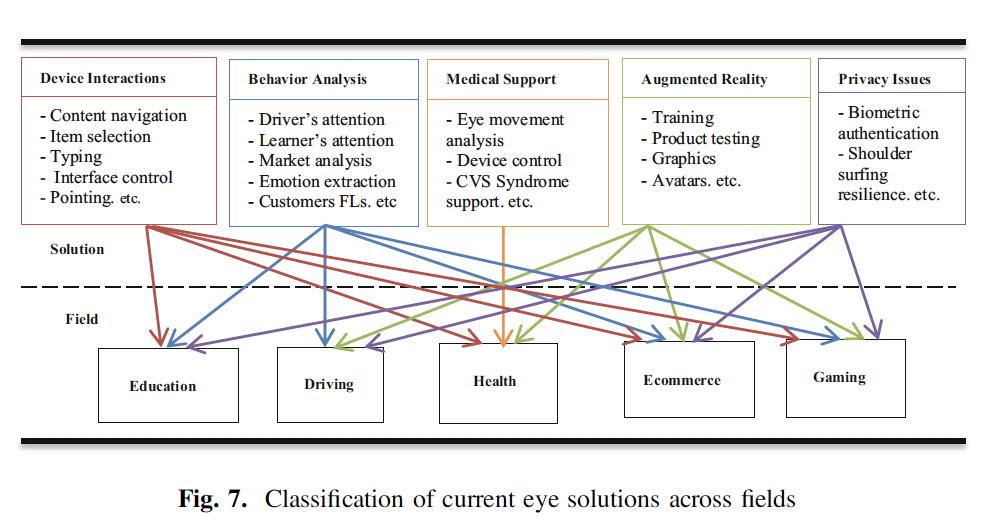

Screen-based eye tracking is being applied in a growing range of academic and commercial applications across the following fields:

- Education

- Driving & Performance training

- Health

- eCommerce

- Gaming

Eye tracking gives immediate insight into user behavior, and measurements of visual attention, showing what people notice and engage with on screens or in the world, are an invaluable resource. They often provide a major advantage when improving device interactions, behavior analysis, medical support, and gameplay design, all of which fall under the umbrella term of human-centered design.

Source: Shehu, I., Wang, Y., Athuman, A., & Fu, X. (2020). “Paradigm Shift in Remote Eye Gaze Tracking Research: Highlights on Past and Recent Progress.” In Advances in Intelligent Systems and Computing, p. 171. Springer, Switzerland.


The infographic above highlights an emerging trend: combining eye tracking with other modalities such as EEG, facial expression analysis, and GSR within the fields listed above, so that designs can be optimized based on a more complete understanding of human behavior. In the infographic, these emerging solutions appear alongside already established areas of use and research. For example, behavior analysis in automotive scenarios is primed for multimodal research, such as measuring driver attention by complementing well-established eye tracking practices with facial emotion detection in smart cars. Telehealth, e-learning, and eCommerce have also accelerated over the past year, creating a greater need for integrated analyses of human-computer interaction.

The expansion of existing research fields such as the Internet of Things (IoT), automotive safety, autonomous vehicles, medical devices, and product and web UX, through new and innovative research approaches and methodologies, indicates where eye tracking research is heading this year and in the near future.

It is worth keeping in mind that the overwhelming majority of both eye tracking hardware and software is developed specifically for research and testing of human-centered design. Combined with the global investment currently being made in the field, this suggests that the emerging trends we see today are poised to grow substantially over the coming years.

See all iMotions-powered research with eye tracking

Novel Research in Eye Tracking

As noted above, eye tracking has been heavily used in advertising and consumer preference research, but it is now becoming more popular in human-computer interaction, usability, healthcare, and automotive/transportation, leading to innovative multimodal research within these disciplines.

Check out: Advanced UX and Usability Methods

A recent study conducted on an e-learning platform showed that heuristic UX evaluation, when coupled with eye tracking, gives researchers a more robust set of tools for identifying and evaluating usability issues than any single testing method. The study found that combining heuristic evaluation with eye tracking and usability testing helps researchers identify more user issues within e-learning portals, especially complex ones. The researchers conclude that this evaluation methodology can be used to improve usability, and thereby maximize adoption, of e-learning platforms and similar web portals.

Eye tracking is also showing promise in research on autonomous and distracted driving. Researchers at the University of Michigan used eye tracking, heart rate, and GSR as psychophysiological measures in a driving simulator to help predict takeover time and performance when a driver must take control of a vehicle, switching from autonomous to manual driving. Participants had to take over unexpectedly in different urban driving scenarios, and at other times performed a visual memory task. Impressively, the researchers predicted drivers' takeover performance with over 70% accuracy using their physiological data.

Eye tracking in healthcare goes beyond studying medical device interaction and can even be applied to assessing visual preferences among people with Autism Spectrum Disorder (ASD). A recent study by iMotions client Janssen Research and Development presented videos representing biological and non-biological motion to participants with ASD and compared their eye movements against a typically developing (TD) control group. The researchers found that “participants with ASD spent less overall time looking at presented stimuli than TD participants (P < 10⁻³), showed less preference for biological motion” and were also slower to fixate on the motion, suggesting that individuals with ASD differ from TD individuals in their eye movement patterns and motion preferences.

Learn more about Automotive Research

If you’re interested in staying up to date with the latest in eye tracking technology from iMotions, sign up for our newsletter.