Facial expressions play a key role in interpersonal communication, both in conveying our own emotions and intentions and in interpreting those of others. Research has shown that we connect better with other people when we exhibit signs of empathy and facial mimicry. However, the relationship between empathy and facial mimicry is still debated. Factors contributing to the inconsistent results across existing studies include the use of different instruments for measuring both empathy and facial mimicry, and a frequent failure to account for differences across demographic groups. This study first looks at differences in the empathetic ability of people across demographic groups based on gender, ethnicity and age. Empathetic ability is measured using the Empathy Quotient, which captures a balanced representation of both emotional and cognitive empathy. Using statistical and machine learning methods, the study then investigates the correlation between the empathetic ability and the facial mimicry of subjects in response to images portraying different emotions displayed on a computer screen. Unlike existing studies that measure facial mimicry using electromyography, this study employs a technology that detects facial expressions based on video capture and deep learning. This choice was made in the context of increased online communication during and after the COVID-19 pandemic. The results of this study confirm the previously reported difference in empathetic ability between females and males. However, no significant difference in empathetic ability was found across age or ethnic groups. Furthermore, no strong correlation was found between empathy and facial reactions to faces portraying different emotions shown on a computer screen. Overall, the results of this study can be used to inform the design of online communication technologies and of tools for training empathy in team leaders, educators, and social and health care providers.

Related Posts

-

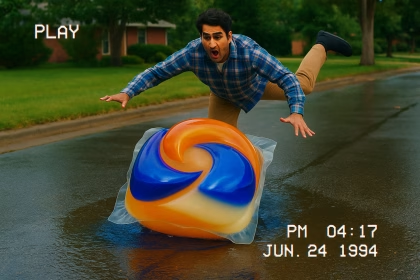

More Likes, More Tide? Insights into Award-winning Advertising with Affectiva’s Facial Coding

Consumer Insights

-

Why Dial Testing Alone Isn’t Enough in Media Testing — How to Build on It for Better Results

Consumer Insights

-

The Power of Emotional Engagement: Entertainment Content Testing with Affectiva’s Facial Expression Analysis

Consumer Insights

-

Tracking Emotional Engagement in Audience Measurement is Critical for Industry Success

Consumer Insights