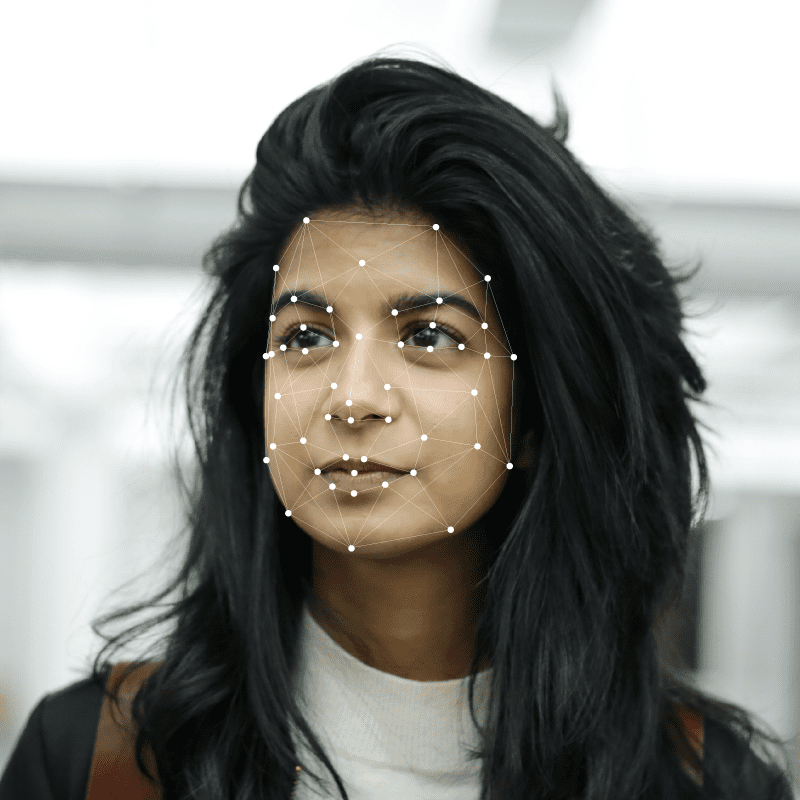

Facial analysis technologies use facial landmarks such as pupils, eye corners, and lip boundaries to make inferences about a person’s emotional state, age, gender, or underlying medical conditions. Inferences about emotional state can in turn feed further behavioral insights.

We scan the faces around us all the time. When we meet someone, our built-in perception mechanisms work below our awareness, not just to recognize a familiar face but also to detect the person’s emotional state and infer their underlying intentions. When we look at someone, we pick up cues such as an unfriendly stare, a raised brow, or an inviting smile. These cues help us respond appropriately to the person in front of us, for example by moving away from an unfriendly stranger or leaning closer to a trusted friend. Machine learning and computer vision techniques now make it possible to perform this kind of facial analysis with technology.

What is Facial Analysis?

Face analytics, also known as facial analysis, is the process of detecting and interpreting cues from someone’s face using technology. Facial analysis tools and software detect faces in images or videos and extract meaningful information about the person from them. That information is then used to respond to human behavior in real time or to make predictions about a person’s gender, health, and emotional state.
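As a rough illustration of the "extract meaningful information" step, the sketch below assumes a face has already been detected and its landmarks located. The landmark names, the coordinates, and the `mouth_features` helper are hypothetical and do not reflect the output of any particular product. The sketch computes a few simple geometric cues and normalizes them by the distance between the pupils, so they do not depend on how large the face appears in the image.

```python
import math

# Hypothetical 2D landmark positions (x, y) in pixels, with y growing downward
# as in most image coordinate systems. A real system would obtain these from a
# face detector and landmark model; the values here are made up.
landmarks = {
    "left_pupil": (100.0, 120.0),
    "right_pupil": (160.0, 120.0),
    "mouth_left": (108.0, 180.0),
    "mouth_right": (152.0, 180.0),
    "upper_lip": (130.0, 178.0),
    "lower_lip": (130.0, 190.0),
}


def distance(a, b):
    """Euclidean distance between two (x, y) points."""
    return math.hypot(a[0] - b[0], a[1] - b[1])


def mouth_features(points):
    """Return scale-normalized mouth measurements from facial landmarks."""
    scale = distance(points["left_pupil"], points["right_pupil"])
    if scale == 0:
        raise ValueError("pupils coincide; cannot normalize")
    lip_center_y = (points["upper_lip"][1] + points["lower_lip"][1]) / 2
    corner_y = (points["mouth_left"][1] + points["mouth_right"][1]) / 2
    return {
        "mouth_width": distance(points["mouth_left"], points["mouth_right"]) / scale,
        "mouth_opening": distance(points["upper_lip"], points["lower_lip"]) / scale,
        # Positive when the mouth corners sit above the lip center (y grows downward).
        "corner_lift": (lip_center_y - corner_y) / scale,
    }


features = mouth_features(landmarks)
print(features)
```

Measurements like these are only inputs. Mapping them to an emotion, an age estimate, or any other inference would require a trained model, which is outside the scope of this sketch.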

Benefits of Facial Features Analysis

The face is the most complex nonverbal system in the body, so access to the information it carries is a major advancement for many industries, helping them learn about people’s emotional reactions and preferences. Face analytics tools have positive implications in domains such as business, marketing, and education.

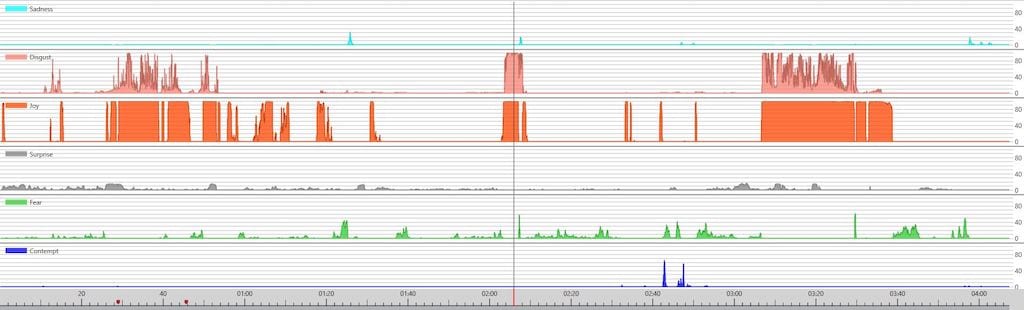

Accurate emotion analysis and sentiment detection

The emotions we show reveal a lot about how we react to a given stimulus. Detecting what someone is feeling in real time makes it possible to respond more appropriately to that person’s non-verbal feedback. For example, businesses that rely on customer feedback will find it very useful to have immediate information about a client’s reaction to a product, advertisement, or service. They can then improve and optimize products or marketing strategies based on that emotional reaction.

Data-driven decision-making

Human decision-making results from a complex interplay of emotions, situational contexts, and cognitive appraisal. Having more information about someone’s individual details, such as gender, age, and emotional state, allows decisions to be better tailored to that person. In a study on identity and emotion-based decision-making, both personal identity and emotional expressions made significant contributions to social decision-making processes. In addition, ignoring the other person’s emotions interfered with decisions based on identity. This means that even if you have information about someone’s identity, ignoring their context-based emotions might lead to less optimal decision-making. In an ideal world, decisions are made by combining information about who someone is with how they’re feeling or reacting in the moment.

Real-time monitoring of facial expressions

There are many cases in which people’s verbal feedback differs from their real emotional expression. Sometimes people say one thing, but their non-verbal language indicates that they might actually be feeling the opposite. In many contexts this has no consequences, but in some industries it means missing out on valuable information about someone. For example, businesses in the retail industry can gain much more insight into a customer’s emotional reaction to different products with face analysis technology. They can better understand whether the customer responds in a positive, negative, or neutral way: what they enjoy, what they dislike, and what leaves them with no emotional reaction. Real-time monitoring of facial expressions gives a better understanding of customer feedback, which in turn supports better decision-making.
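To make the positive, negative, and neutral breakdown concrete, here is a minimal sketch that summarizes per-frame valence scores for each product. The scores, the product names, the `0.2` threshold, and the `label_valence` and `summarize` helpers are all assumptions made for illustration; an actual facial analysis tool may report emotions in a different form.

```python
from collections import Counter

LABELS = ("positive", "negative", "neutral")


def label_valence(score, threshold=0.2):
    """Map a valence score in [-1, 1] to a coarse label (threshold is assumed)."""
    if score > threshold:
        return "positive"
    if score < -threshold:
        return "negative"
    return "neutral"


def summarize(scores, threshold=0.2):
    """Return the share of frames that fall under each label."""
    if not scores:
        return {label: 0.0 for label in LABELS}
    counts = Counter(label_valence(s, threshold) for s in scores)
    return {label: counts[label] / len(scores) for label in LABELS}


# Made-up per-frame scores recorded while a customer looked at two products.
frames = {
    "product_a": [0.6, 0.5, 0.1, 0.7],
    "product_b": [-0.4, -0.3, 0.0, -0.5],
}

summary = {name: summarize(scores) for name, scores in frames.items()}
for name, shares in summary.items():
    print(name, shares)
```

Reporting shares rather than raw counts keeps products comparable even when a customer looks at one of them for longer than another.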

iMotions’ Facial Analysis Online Software

Human behavior tools are drastically changing the way we understand people. What was once a subtle non-verbal cue or a simple gesture is now a way into a customer’s mind. Whether you work with people in a research, commercial, technology, or healthcare setting, having advanced tools to obtain key information about them can make a tremendous difference in your work.

At iMotions, we offer a range of tools to leverage the insights offered by eye tracking and facial expressions so you can better understand the people you interact with. Our latest facial analysis software will help you collect data about your customers’ nuanced emotions so you can make decisions more aligned with them. With the iMotions face analytics software, you can reap the benefits of this emotion analysis technology and add a deeper layer of understanding of your customers.
