Compare Affectiva’s AFFDEX 2.0 with leading open-source facial coding tools. Across 10.5M frames, this study finds AFFDEX 2.0 ahead on accuracy and feature coverage, with face detection on par with OpenFace 2.0 and well ahead of LibreFace, highlighting why commercial AI outperforms free alternatives for scalable, real-world emotion detection and research applications.

Introduction

AI and emotion detection technologies have been gaining momentum for some time, and researchers and developers often face a choice: rely on free, open-source toolkits or invest in a commercial-grade solution to analyze data and build actionable insights.

Free tools are appealing because they are accessible, but the real question is whether they hold up when tested on large-scale, real-world datasets. In this blog, we dig into the findings of a comprehensive internal study in which our team benchmarked AFFDEX 2.0 against some of the most popular free toolkits available online.

AFFDEX vs Open Source White Paper

In our latest whitepaper, Benchmarking Facial Action Coding at Scale: AFFDEX 2.0 vs. Open-Source Toolkits, we show that on real-world face videos recorded under a range of conditions, Affectiva’s facial expression analysis technology leads on accuracy, coverage, and stability, making it the definitive choice for research and applications focused on emotion detection and evaluation. In this blog, we highlight some of the key findings of that comparison.

But first, a quick refresher on our offerings and AFFDEX:

At iMotions, Affectiva technology powers a variety of product offerings, including:

- iMotions Facial Expression Analysis Module

- Affectiva Media Analytics for advertisement and entertainment content testing

- Affectiva Facial Coding API

- Affectiva Facial Coding SDK

All these offerings are powered by AFFDEX 2.0, Affectiva’s current core Facial Coding AI engine. Our Science team actively works to innovate and improve our Affectiva Facial Coding AI algorithms; we routinely publish our work as we strongly believe in transparency in our technology and academic rigor in what we build and provide to our partners.

Our most recent publication on AFFDEX 2.0 explains in more detail how our technology works and introduces two emotional states that are unique to Affectiva: Sentimentality and Confusion. To learn which facial expressions are tied to our emotional state outputs, see our AI-Driven Emotion Recognition 101 blog, as well as our comprehensive guide on how to use facial expression analysis in iMotions.

Methods: designing our evaluation of AFFDEX 2.0 and open-source toolkits

We compared AFFDEX 2.0 with two popular free solutions available on the market: OpenFace 2.0 and LibreFace. The goal was to understand how these solutions stack up in terms of reliability, face coverage, and accuracy in detecting facial expressions.

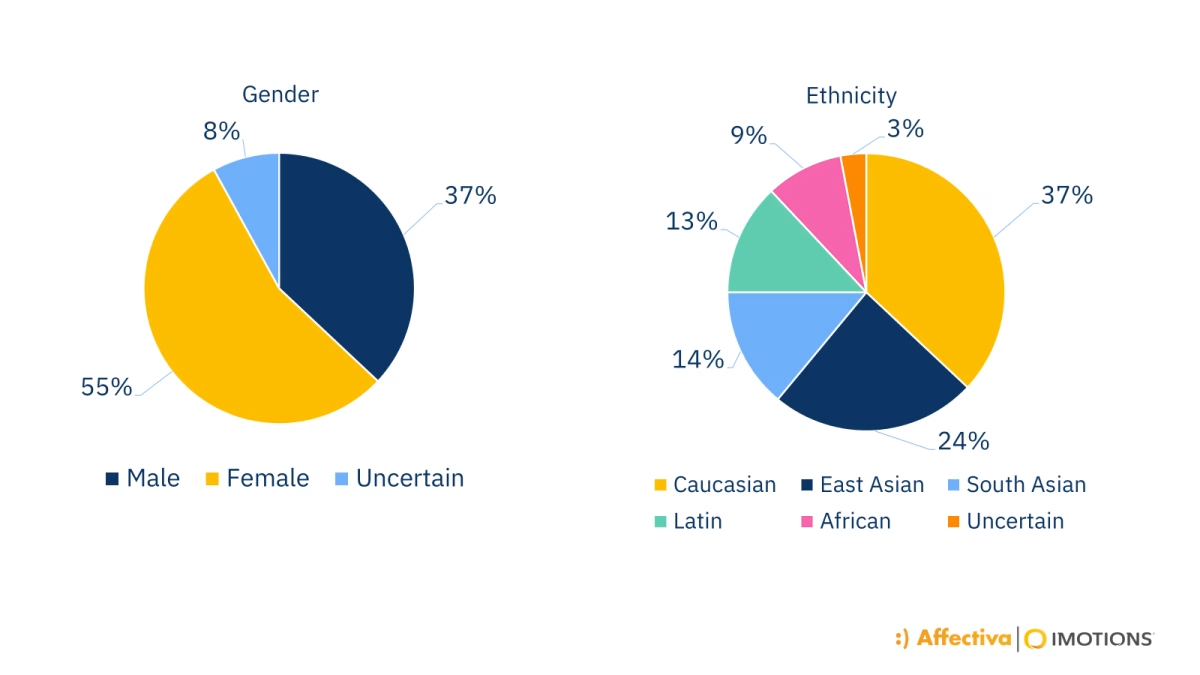

To ensure a fair comparison, we tested all three toolkits on a subset of our database consisting of over 7,800 face videos and approximately 10.5 million frames of data, spanning different genders and ethnicities (see the distributions below).

Gender and ethnicity distribution of face videos from Affectiva’s database that was used in this analysis

To compare the outputs of all three toolkits on a common footing, our science team computed two metrics: balanced accuracy and ROC-AUC (the area under the Receiver Operating Characteristic curve), which measures how well a score separates frames where an action unit or facial expression is present from frames where it is absent. Because OpenFace and LibreFace output intensity estimates rather than likelihoods, we treated their continuous intensity values as confidence scores so that their ROC-AUC could be aligned with AFFDEX.
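For readers who want to run this style of evaluation on their own data, the two metrics can be sketched as below. This is a minimal, textbook implementation in Python, not our science team's evaluation code. It assumes per-frame binary ground-truth labels for a single AU, binary predictions for balanced accuracy, and one continuous score per frame (for example an AFFDEX 0-100 likelihood or an OpenFace/LibreFace 0-5 intensity) for ROC-AUC. The function names and the example values are made up for illustration.

```python
from typing import List, Sequence


def balanced_accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Mean of sensitivity (true-positive rate) and specificity (true-negative rate).

    Assumes both classes (AU present = 1, absent = 0) occur in y_true.
    """
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return (sensitivity + specificity) / 2


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """ROC-AUC via the rank-sum (Mann-Whitney U) formulation.

    Tied scores receive their average rank, so a tied positive/negative
    pair counts as half a correct ordering.
    """
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    ranks: List[float] = [0.0] * len(scores)
    i = 0
    while i < len(order):
        # Find the run of equal scores starting at sorted position i.
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1  # 1-based average rank of the tied run
        for k in range(i, j + 1):
            ranks[order[k]] = average_rank
        i = j + 1
    n_pos = sum(1 for t in y_true if t == 1)
    n_neg = len(y_true) - n_pos
    rank_sum_pos = sum(r for r, t in zip(ranks, y_true) if t == 1)
    return (rank_sum_pos - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)


# Made-up example: six frames, human-coded AU labels vs. one toolkit's outputs.
labels = [0, 0, 1, 1, 0, 1]
presence = [0, 0, 1, 0, 0, 1]               # binary presence predictions
intensity = [0.0, 1.2, 3.4, 0.8, 0.0, 2.5]  # 0-5 intensity used as a confidence score

print(balanced_accuracy(labels, presence))            # ≈ 0.833
print(roc_auc(labels, intensity))                     # ≈ 0.889
print(roc_auc(labels, [s * 20 for s in intensity]))   # same value: rescaling keeps the ranking
```

Because ROC-AUC depends only on how the scores rank the frames, a 0-5 intensity scale and a 0-100 likelihood scale can be compared directly without rescaling, which is what makes treating intensities as confidence scores a reasonable alignment step.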

Key Finding #1: Open source options do not offer a comprehensive facial expression feature set compared to AFFDEX 2.0.

Our analysis found that the open-source options cannot match the AFFDEX 2.0 feature set. AFFDEX 2.0 offers more comprehensive coverage of facial action units (AUs):

- AFFDEX 2.0 detects 20 AUs across the whole face, giving researchers a richer, more granular understanding of emotion and expression.

- OpenFace 2.0 provides presence predictions for 18 AUs and intensity estimates for 17 AUs.

- LibreFace provides presence estimates for 11 AUs and intensity estimates for 12 AUs.

Given these differences in coverage, we focused the accuracy evaluation on a simpler subset of our outputs (e.g., Smile, Brow Furrow, Nose Wrinkle); the full accuracy results are in our whitepaper.

Beyond facial expressions, AFFDEX offers a comprehensive suite of signals that also includes facial landmarks, head pose, AUs, and high-level emotional states (e.g., Joy, Sadness, Surprise).

Key Finding #2: Not all open source toolkits can successfully detect faces in naturalistic environments.

Although most of our test videos featured front-facing subjects looking toward the camera, face detection rates varied significantly across toolkits.

Of note:

- AFFDEX 2.0 and OpenFace 2.0 successfully detected faces in approximately 95% of all tested frames from our dataset.

- In comparison, LibreFace achieved an overall 83% detection rate.

These findings show notably stronger face detection by AFFDEX 2.0 and OpenFace 2.0 than by LibreFace, even on a dataset made up largely of controlled, front-facing footage. When choosing a solution, also consider how you plan to collect data (e.g., in a lab versus remotely, in a car or simulator versus in front of a computer) and what best fits your research needs.

Key Finding #3: Open source toolkits fall short on accuracy when detecting facial expressions.

Across our evaluation of all three tools, AFFDEX 2.0 was more robust on nearly every AU. Because face detection rates differed among AFFDEX 2.0, OpenFace 2.0, and LibreFace, we restricted the accuracy analysis to the intersection of frames in which all three toolkits detected a face.
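The frame-matching step can be sketched as follows. This is a minimal illustration, not the study's pipeline: it assumes each toolkit's output for a video has already been loaded into a dictionary mapping a frame index to a score, with None where no face was found. That data structure and the helper names (`detected_frames`, `detection_rate`, `common_frames`) are hypothetical. Scoring every toolkit only on the shared frames keeps differences in detection rate from skewing the accuracy comparison.

```python
from typing import Dict, List, Optional, Set

# Hypothetical per-toolkit output for one video:
# frame index -> AU score, or None when the toolkit found no face in that frame.
ToolkitOutput = Dict[int, Optional[float]]


def detected_frames(output: ToolkitOutput) -> Set[int]:
    """Frames in which this toolkit reported a face."""
    return {frame for frame, score in output.items() if score is not None}


def detection_rate(output: ToolkitOutput, total_frames: int) -> float:
    """Share of all frames in which a face was detected."""
    return len(detected_frames(output)) / total_frames


def common_frames(*outputs: ToolkitOutput) -> List[int]:
    """Frames in which every toolkit detected a face (the set intersection)."""
    shared = set.intersection(*(detected_frames(o) for o in outputs))
    return sorted(shared)


# Made-up outputs for a four-frame clip.
affdex = {0: 12.0, 1: 80.5, 2: None, 3: 40.0}
openface = {0: 0.3, 1: 2.1, 2: 1.0, 3: None}
libreface = {0: 0.0, 1: 1.7, 2: None, 3: 0.9}

print(detection_rate(affdex, total_frames=4))      # 0.75
print(common_frames(affdex, openface, libreface))  # [0, 1]
```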

When comparing AFFDEX 2.0 to OpenFace 2.0:

- AFFDEX 2.0 achieved a notable 8.5 percentage point lead in average balanced accuracy (0.753 for AFFDEX vs. 0.668 for OpenFace) and a substantial margin in average ROC-AUC (0.907 vs. 0.721).

The gap between AFFDEX 2.0 and LibreFace was even more pronounced:

- AFFDEX 2.0 outperformed LibreFace by 12.9 percentage points in average balanced accuracy (0.753 for AFFDEX vs. 0.624 for LibreFace) and again in average ROC-AUC (0.907 vs. 0.677).
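The percentage-point leads quoted above are absolute differences between the averaged metrics, as the worked arithmetic below shows. They are not relative improvements: measured against OpenFace 2.0's 0.668, for example, a 0.085 gain in balanced accuracy is roughly 12.7% in relative terms.

```latex
\begin{aligned}
\Delta_{\text{BA}}^{\text{OpenFace}}   &= 0.753 - 0.668 = 0.085 \;\Rightarrow\; 8.5\ \text{pp}\\
\Delta_{\text{BA}}^{\text{LibreFace}}  &= 0.753 - 0.624 = 0.129 \;\Rightarrow\; 12.9\ \text{pp}\\
\Delta_{\text{AUC}}^{\text{OpenFace}}  &= 0.907 - 0.721 = 0.186\\
\Delta_{\text{AUC}}^{\text{LibreFace}} &= 0.907 - 0.677 = 0.230
\end{aligned}
```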

These findings indicate that while open-source toolkits such as LibreFace or OpenFace 2.0 may be useful for analysis and may perform well on controlled datasets, their accuracy can suffer on facial expression data collected “in the wild”, where conditions change within a video (e.g., shifting background lighting, face or body movement, changes in head angle).

For a per-AU breakdown of balanced accuracy and ROC-AUC across AFFDEX 2.0, LibreFace, and OpenFace 2.0, see our whitepaper.

Why it matters to invest in a Facial Coding AI toolkit

Whether you are testing advertisements, conducting academic research in a lab, or building features for automotive safety – having a toolkit that can give you accurate results and comprehensive insights is integral to your study’s success.

While free solutions can be valuable contributions to the research community, our findings show that AFFDEX 2.0 remains the superior solution, suitable for large-scale applications requiring high generalizability and precision across different facial behaviors.

Beyond face detection and accuracy, another factor to weigh when evaluating a facial coding AI tool is the format of its output. Affectiva’s signals provide 0-100 likelihood scores for AU presence, whereas the open-source OpenFace 2.0 and LibreFace toolkits provide only binary presence labels and continuous intensity estimates on a 0-5 scale.

Depending on your research needs and how granular you want the data to be (for example, when capturing micro-expression movements), a raw 0-100 signal scale provides a level of granularity that the open-source toolkits we tested do not offer. For users of the iMotions Facial Expression Analysis Module in iMotions Lab who want to apply thresholds, we offer R-Notebooks that enable thresholding and aggregation of AFFDEX 2.0 outputs.
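As a rough illustration of what thresholding and aggregating a 0-100 signal can look like, the sketch below flags each frame as "AU active" when its likelihood meets a threshold and then reports the percentage of active frames per AU. It is a hypothetical Python example, not the R-Notebooks' implementation; the default threshold of 50, the function names, and the data layout are assumptions chosen for illustration.

```python
from typing import Dict, List


def active_frames(scores: List[float], threshold: float = 50.0) -> List[bool]:
    """Flag a frame as 'AU active' when its 0-100 likelihood meets the threshold."""
    return [score >= threshold for score in scores]


def percent_active(scores: List[float], threshold: float = 50.0) -> float:
    """Aggregate one AU's per-frame scores into the percentage of active frames."""
    flags = active_frames(scores, threshold)
    return 100.0 * sum(flags) / len(flags)


def summarize(au_scores: Dict[str, List[float]], threshold: float = 50.0) -> Dict[str, float]:
    """Percentage of active frames for every AU in a recording."""
    return {au: percent_active(scores, threshold) for au, scores in au_scores.items()}


# Made-up 0-100 likelihood scores for a five-frame clip.
recording = {
    "Smile": [5.0, 62.0, 88.5, 49.9, 97.0],
    "Brow Furrow": [0.0, 3.2, 55.0, 1.1, 0.4],
}

print(summarize(recording))                  # {'Smile': 60.0, 'Brow Furrow': 20.0}
print(summarize(recording, threshold=90.0))  # {'Smile': 20.0, 'Brow Furrow': 0.0}
```

Because the raw scores are kept, the same recording can be re-summarized at a stricter or looser threshold to suit the study design; with a binary presence label, that cut-off has already been fixed by the toolkit.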

Interested in learning more about Affectiva’s facial coding AI? Schedule a demo with our team!
